Your Disk Is Full. Now What?

You know the feeling. df -h returns something alarming and you’re staring at a filesystem at 97% and your brain immediately goes: “How did this happen. Who did this.”

Then you open a dozen tabs about du flags and 45 minutes later you’ve found your culprit, but you’ve also aged three years.

Here’s the thing: du and df work fine. They’ve worked fine since before you were born (probably). But once you’ve used dust for 10 minutes you’ll never go back to squinting at du output trying to mentally sort a wall of kilobyte counts.

This is the 2026 companion to the classic du/ncdu guide. That one covers the fundamentals. This one covers what to reach for when you want the fundamentals to stop making you feel bad.

The Old Standbys (Still Pull Their Weight)

Before we talk about shiny Rust tools, a word of respect for the originals.

df -h: your first stop. Mount points, usage percentages, filesystem overview. Nothing replaces it for a quick “which partition is screaming.”

    df -h

du -sh * | sort -rh | head -20: the classic one-liner. Drop this in any directory to see the 20 biggest entries. It works everywhere, no install required, and it’ll be there when you SSH into a weird VPS at 2 AM.

    du -sh * | sort -rh | head -20

ncdu: if you’re on a remote server and need interactive navigation, ncdu is still excellent. Available in basically every package manager, runs in a terminal, lets you descend into directories and delete files without leaving the TUI. Reliable, boring, works great.

None of these are going anywhere. But they have rough edges that the newer tools sand down.
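One rough edge worth knowing about: the `*` glob in that one-liner skips dotfiles, so a bloated `.cache` never shows up in the list. Here is a minimal sketch of a wrapper that includes hidden entries. The name `top_entries` is made up for illustration, and it assumes GNU `sort` and `xargs`.

```shell
# top_entries DIR [N]: list the N largest direct children of DIR, dotfiles included.
# Hypothetical helper name; assumes GNU sort (-h) and GNU xargs (-r).
top_entries() {
    # Unlike the * glob, find also returns hidden entries such as .cache.
    # -print0 / -0 keep filenames with spaces intact.
    find "${1:-.}" -mindepth 1 -maxdepth 1 -print0 \
        | xargs -0 -r du -sh -- 2>/dev/null \
        | sort -rh \
        | head -n "${2:-20}"
}

# Example: top_entries /var 10
```

Drop it in your shell profile and it behaves like the one-liner, minus the blind spot.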

duf — df With Actual Readability

duf is a df replacement that respects your eyeballs. Colorful output grouped by filesystem type, clean columns for Used/Free/Total, and it runs on macOS, Linux, and Windows.

    # Install
    apt install duf     # Debian/Ubuntu
    brew install duf    # macOS
    # Or grab a binary from github.com/muesli/duf

    duf

You get mounted filesystems grouped into “Devices”, “Special”, and “Network” sections, with percentage bars. At a glance you know which mount is the problem, what type it is, and whether you should care about it.

It’s not fancy, it’s just df done right.

dust — du With a Brain

dust is the one that converts people. Run it in any directory and you get a treemap-style bar chart, automatically sized to your terminal, showing exactly where the space is going — no mental math required.

    # Install
    cargo install du-dust
    # Or: brew install dust / apt install dust (newer distros)

    dust
    dust -d 3        # limit depth to 3 levels
    dust /var/log    # specific path

The output shows percentage bars next to each directory. Big directories jump out visually. It scans fast, handles large trees without choking, and the output is actually readable without piping through sort and head.

This is the one you’ll alias to du within a week.

dua-cli — ncdu’s Faster Cousin

dua-cli (dua) is an interactive TUI disk analyzer, similar to ncdu but written in Rust and significantly faster on large filesystems with lots of inodes.

    cargo install dua-cli
    # Or: brew install dua-cli

    dua i    # interactive mode
    dua      # non-interactive size summary

In interactive mode: navigate with arrow keys, press d to mark entries for deletion, confirm to remove. The parallel scanning means you’re not waiting on it. On an NVMe with a full /var it’s noticeably snappier than ncdu.

If you live on big servers with millions of files, this is your ncdu upgrade.

fclones — Find and Kill Duplicate Files

This one’s in a different category. fclones doesn’t show you directory sizes — it finds duplicate files using fast hashing, even across millions of files.

    cargo install fclones
    # Or grab a binary from github.com/pkolaczk/fclones

    fclones group .                     # find all duplicates in current dir
    fclones group . | fclones remove    # find and remove duplicates (keeps one copy)
    fclones group . --min 1MB           # only care about files over 1MB

Real-world scenarios where this earns its keep:

- Docker volumes and build caches — you’d be shocked how many identical layers end up duplicated in weird places

- Photo libraries — especially after consolidating backups from multiple sources

- node_modules across projects — don’t ask how much space this can reclaim, just run it

The group | remove pipeline is idiomatic and safe — it keeps one copy of each duplicate group and removes the rest.
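If “finds duplicates by hashing” feels abstract, the core idea fits in a few lines of shell. This is a simplified illustration, not how fclones is implemented: it hashes every non-empty file in full, which is exactly the work a dedicated tool tries to avoid. The name `list_dupes` is hypothetical, and the sketch assumes GNU coreutils.

```shell
# list_dupes DIR: print groups of files under DIR that share a SHA-256 digest,
# with a blank line between groups.
# Hypothetical helper; assumes GNU coreutils (sha256sum, uniq -w / --all-repeated).
list_dupes() {
    find "$1" -type f ! -empty -print0 \
        | xargs -0 -r sha256sum -- \
        | sort \
        | uniq -w 64 --all-repeated=separate   # compare only the 64-char digest
}

# Example: list_dupes ~/Pictures
```

It only lists; nothing is deleted. On anything bigger than a small folder, reach for fclones instead.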

fd — Finding Large Files Fast

Not a disk analyzer, but worth having in the triage toolkit. fd is a faster find replacement, and it makes locating large files simple.

    apt install fd-find    # Debian/Ubuntu (binary is fdfind)
    brew install fd        # macOS

    fd . --type f --size +100m         # files over 100MB
    fd . --type f --size +500m /var    # big files in /var specifically

Compared to find . -size +100M -type f, the fd version is faster on large directories and the syntax is less hostile to human memory.
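For the machines where fd isn't installed, the find form can be wrapped once so you never have to remember it again. A rough sketch, with a made-up function name `big_files`; it also ranks the matches by size, using the `M` size suffix that GNU and BSD find accept.

```shell
# big_files DIR MIN_MIB: list regular files larger than MIN_MIB MiB, largest first.
# Hypothetical helper; the leading column is disk usage in KiB as reported by du -k.
big_files() {
    find "$1" -type f -size +"$2"M -exec du -k {} + 2>/dev/null | sort -rn
}

# Example: big_files /var 100
```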

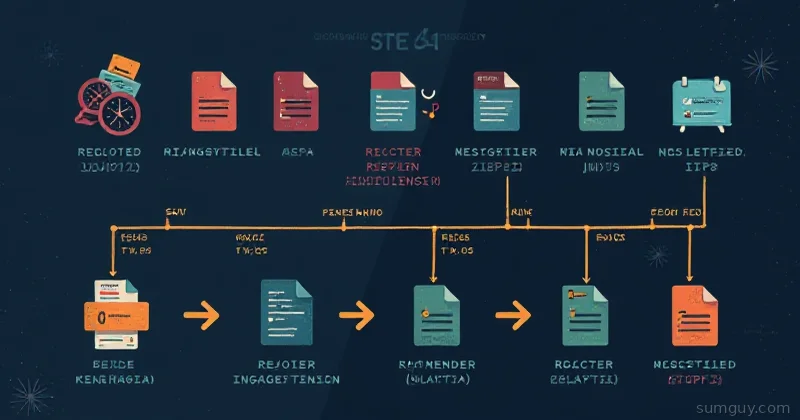

Comparison Table

| Tool | Language | Best For | Install |
|---|---|---|---|
| df | C (coreutils) | Mount overview | Built-in |
| du | C (coreutils) | Directory sizes | Built-in |
| ncdu | C | Interactive TUI on remote servers | apt install ncdu |
| duf | Go | Pretty df replacement | apt install duf |
| dust | Rust | Visual directory breakdown | cargo / package manager |
| dua-cli | Rust | Fast interactive TUI | cargo / package manager |
| fclones | Rust | Duplicate file detection | cargo / binary |
| fd | Rust | Finding large/specific files | apt install fd-find |

Practical Workflows

“My disk is full, what happened?”

    duf              # which filesystem is full
    dust /           # top-level breakdown
    dust /var        # drill into the noisy one
    dust /var/log    # and again

Four commands. You’ll have your answer in under a minute.
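The drill-down itself is mechanical enough to script for the boxes where dust isn't around. A minimal sketch using plain du, with a hypothetical function name `drill`: at each level it prints the largest subdirectory and then descends into it.

```shell
# drill DIR [LEVELS]: at each level, print the largest subdirectory (size in KiB)
# and descend into it. Hypothetical helper; the ( ) body runs in a subshell so
# its variables stay local.
drill() (
    dir="${1:-/}"
    levels="${2:-3}"
    i=0
    while [ "$i" -lt "$levels" ]; do
        # du -skx: summarize each child in KiB without crossing into other filesystems
        line=$(find "$dir" -mindepth 1 -maxdepth 1 -type d -print0 2>/dev/null \
            | xargs -0 -r du -skx -- 2>/dev/null \
            | sort -rn \
            | head -n 1)
        [ -n "$line" ] || break                       # no subdirectories left
        printf '%s\n' "$line"
        dir=$(printf '%s\n' "$line" | cut -f2-)       # drop the size column, keep the path
        i=$((i + 1))
    done
)

# Example: drill /var 3
```

It follows only the single biggest branch, so treat it as a first guess and not a full picture.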

“Find and remove duplicates in my backup drive”

    # Dry run first: see what would be removed
    fclones group /mnt/backup --min 1MB

    # When you're confident:
    fclones group /mnt/backup --min 1MB | fclones remove

Always do the dry run first. fclones is safe but your future self will thank you for the sanity check.
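One way to make that sanity check stick is to save the report to a file, so the list you reviewed is exactly the list that gets acted on. The sketch below relies on `fclones remove` reading a report from stdin and on its `--dry-run` flag, as described in the fclones README; confirm both with `fclones remove --help` on your version. The function name `review_dupes` and the file name `dupes.txt` are arbitrary.

```shell
# review_dupes DIR REPORT: save a duplicate report for DIR, then preview the removal.
# Hypothetical helper; nothing is deleted here.
review_dupes() {
    fclones group "$1" > "$2" || return 1
    # --dry-run prints what would be removed without touching any file
    fclones remove --dry-run < "$2"
}

# Example: review_dupes /mnt/backup dupes.txt
# After reading the preview, act on the same saved report:
#   fclones remove < dupes.txt
```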

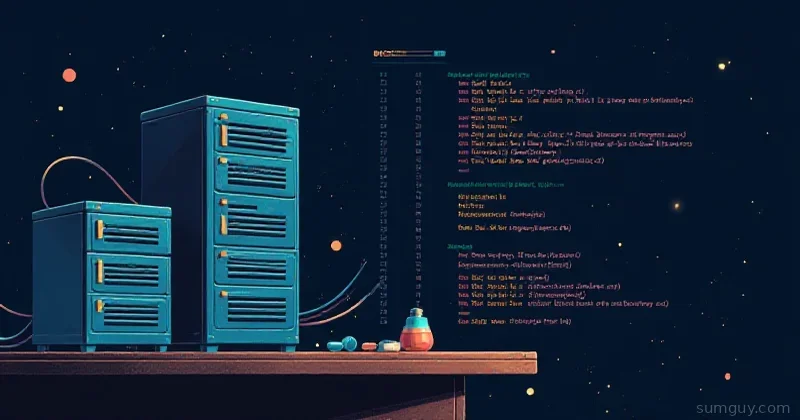

Weekly Disk Report (Bash Script)

    #!/bin/bash
    echo "=== Disk Usage Report: $(date) ==="
    echo ""
    echo "--- Filesystem Overview ---"
    duf --only local
    echo ""
    echo "--- Top 10 Dirs in /home ---"
    dust -d 2 -n 10 /home
    echo ""
    echo "--- Top 10 Dirs in /var ---"
    dust -d 2 -n 10 /var

Drop this in a cron job, pipe it to a Slack webhook or just to a log file. Boring and useful: the best kind of automation.
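If a weekly wall of output is more than you'll actually read, a quieter variant only speaks up when a filesystem crosses a threshold. A minimal sketch built on POSIX `df -P`, so it runs even where duf isn't installed. The function name `over_threshold` and the 90% cutoff are arbitrary choices for the example.

```shell
# over_threshold LIMIT: read `df -P` output on stdin and print "mount usage%"
# for every filesystem at or above LIMIT percent. Hypothetical helper name.
# Caveat: mount points containing spaces are cut off at the first space.
over_threshold() {
    awk -v limit="$1" 'NR > 1 {
        pct = $5                 # Capacity column, e.g. "97%"
        sub(/%/, "", pct)
        if (pct + 0 >= limit) print $6, $5
    }'
}

# Example: stay silent unless something is at 90% or more.
#   df -P | over_threshold 90
```

Empty output means nothing needs attention, which makes it easy to gate a notification on.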

The Honest Take

You don’t need all of these. If you only install two:

- dust — for understanding where space is going locally

- duf — for a readable mount overview

Those two handle 90% of disk investigation. Add fclones when you’re fighting duplicate bloat and dua-cli if you’re on a server where you need to actually delete things interactively.

The old tools work. These tools work better. Your 2 AM self — staring at a full /var/log and wondering why the site is down — will appreciate having something readable to look at.