The Question Everyone Asks

“I have a 24GB GPU. Can I run Llama 2 70B?”

The answer depends on quantization, context window, and batch size. And people always guess wrong.

The Basic Formula

Here’s what you actually need:

VRAM needed = (Model parameters × bytes per parameter) + KV cache + activation overhead

The first part is straightforward. A model’s weights are fixed; they’re just numbers stored on disk. But how many bytes each one takes depends on precision:

- F16 (half precision): 2 bytes per parameter

- Q8 (8-bit quantization): 1 byte per parameter

- Q5 (5-bit quantization): 0.625 bytes per parameter

- Q4_K_M (4-bit): 0.5 bytes per parameter

Example: Llama 2 7B, different quantizations

F16 (half precision): 7B × 2 bytes = 14 GB

Q8 (8-bit): 7B × 1 byte = 7 GB

Q4_K_M (4-bit): 7B × 0.5 bytes ≈ 3.5 GB

This is model weights alone. The rest is where people stumble.
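The weight arithmetic above is easy to script. Here is a minimal sketch, assuming the nominal bytes-per-parameter figures from the list; real GGUF files tend to run somewhat larger because block-wise quantization also stores scale factors. The name `weight_size_gb` and the lookup table are illustrative, not part of any library:

```python
# Nominal bytes per parameter, taken from the list above (an approximation).
BYTES_PER_PARAM = {"f16": 2.0, "q8": 1.0, "q5": 0.625, "q4": 0.5}


def weight_size_gb(params_billion: float, quant: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[quant]


for quant in ("f16", "q8", "q4"):
    print(f"Llama 2 7B {quant}: {weight_size_gb(7, quant):.1f} GB")
```

Treat the result as a floor: it covers the weights only, not the cache or buffers.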

Quantization Formats: The Trade-offs

If you’re using GGUF format (llama.cpp, Ollama, LM Studio), you have quantization options:

| Format | Bytes/param | Quality loss | Speed penalty | When to use |
|---|---|---|---|---|
| F16 | 2.0 | None | Baseline | RTX 4090 with headroom |
| Q8 | 1.0 | Negligible | Minimal | 16GB+ GPUs, quality-first |
| Q5_K_M | 0.625 | Slight | None | Sweet spot for most folks |
| Q4_K_M | 0.5 | Noticeable but acceptable | None | Budget GPUs, still solid |
| Q3_K_M | 0.375 | More noticeable | None | Squeeze large models onto 8GB |

Real talk: Q4_K_M is where most people land. It’s roughly 75% smaller than F16, inference is still fast, and quality is fine for chat.
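One way to turn the table above into a decision is to walk the formats from highest quality down and stop at the first one that fits. This is only a sketch: `best_format` is a hypothetical helper, the bytes-per-parameter values are the nominal ones from the table, and the 30% headroom for cache and buffers is an assumption you should tune to your workload:

```python
# Nominal bytes per parameter from the table above, highest quality first.
FORMATS = [
    ("F16", 2.0),
    ("Q8", 1.0),
    ("Q5_K_M", 0.625),
    ("Q4_K_M", 0.5),
    ("Q3_K_M", 0.375),
]


def best_format(params_billion, vram_gb, headroom=0.30):
    """Return the highest-quality format whose weights plus headroom fit.

    headroom is an assumed fraction of weight size reserved for cache and
    buffers. Returns None when even the smallest format does not fit.
    """
    for name, bytes_per_param in FORMATS:
        needed_gb = params_billion * bytes_per_param * (1 + headroom)
        if needed_gb <= vram_gb:
            return name
    return None


print(best_format(7, 8))    # 7B model on an 8 GB card
print(best_format(70, 24))  # 70B model on a 24 GB card
```

A `None` result means the model will not fit entirely on the GPU at any of the listed formats.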

The Overhead Gotcha

When you load a model for inference, the GPU needs extra memory for:

1. KV cache (conversation history cached in VRAM)

Every token in your context history gets cached as key-value pairs. The formula:

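KV cache = 2 × batch_size × context_length × num_layers × hidden_dim × precision_bytes

The leading 2 covers keys and values. For Llama 2 7B (32 layers, hidden_dim 4096) with 4K context, batch size 1, F16: 2 × 1 × 4096 × 32 × 4096 × 2 bytes ≈ 2.1 GB. Models with grouped-query attention cache far less, because only the key-value heads count toward hidden_dim: Llama 2 70B (80 layers, 8 KV heads × 128 dims = 1024) with 8K context, batch size 1, F16 needs roughly 2.7 GB.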

2. Activation buffers (intermediate computations during inference)

Running forward passes generates temporary tensors. Budget 10–20% of model weight size.

3. Framework overhead (llama.cpp, vLLM, Ollama reserve buffers)

Another 5–10%.

Real-world total overhead: 15–30% extra on top of model weights. Always add it.
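The KV cache formula and the overhead percentages above translate directly into code. This is a minimal sketch: `kv_cache_gb` and `overhead_gb` are hypothetical helpers, the default fractions are simply midpoints of the ranges quoted above, and the layer count and width used for Llama 2 7B (32 layers, hidden size 4096) come from its published configuration rather than from this article:

```python
def kv_cache_gb(context_length, num_layers, hidden_dim,
                batch_size=1, precision_bytes=2):
    """KV cache size in GB: keys and values for every token in every layer."""
    total_bytes = (2 * batch_size * context_length
                   * num_layers * hidden_dim * precision_bytes)
    return total_bytes / 1e9


def overhead_gb(weights_gb, activation_frac=0.15, framework_frac=0.075):
    """Activation buffers plus framework overhead, as fractions of weight size.

    The defaults are midpoints of the 10-20% and 5-10% ranges quoted above.
    """
    return weights_gb * (activation_frac + framework_frac)


# Llama 2 7B (assumed: 32 layers, hidden size 4096), 4K context, F16 cache
cache = kv_cache_gb(4096, num_layers=32, hidden_dim=4096)
extra = overhead_gb(3.5)
print(f"KV cache: {cache:.2f} GB, other overhead: {extra:.2f} GB")
```

For models with grouped-query attention, pass the combined width of the key-value heads as `hidden_dim` instead of the full hidden size.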

Llama 2 7B Q4_K_M example:

    Model weights:   3.5 GB
    KV cache (4K):   2.1 GB
    Activations:     0.5 GB (15% of model)
    Framework:       0.4 GB
    ─────────────────────────
    Total:           6.5 GB

Context Window = Cache Explosion

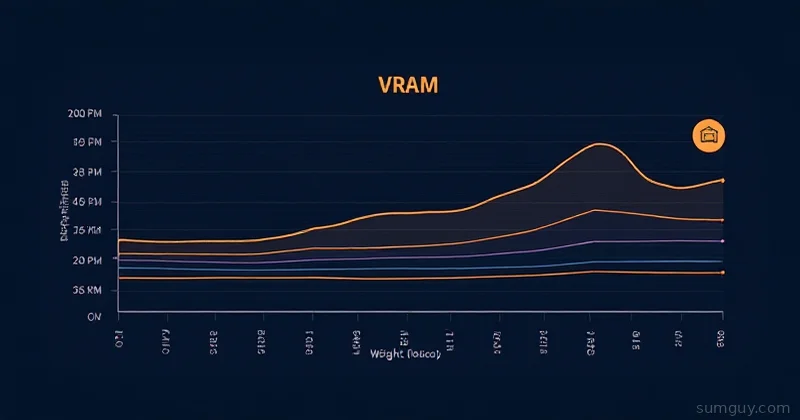

This is the killer variable. Larger context windows = massive KV cache.

Llama 2 7B Q4_K_M, batch size 1:

    4K context:  3.5 GB model + 2.1 GB cache = 5.6 GB
    8K context:  3.5 GB model + 4.3 GB cache = 7.8 GB
    16K context: 3.5 GB model + 8.6 GB cache = 12.1 GB
    32K context: 3.5 GB model + 17.2 GB cache = 20.7 GB

If you’re tight on VRAM, limit context per request:

    # Ollama with 2K context instead of 4K
    curl http://localhost:11434/api/generate -d '{
      "model": "mistral",
      "prompt": "What is Docker?",
      "options": { "num_ctx": 2048 },
      "stream": false
    }'

Reference Table: Common Models + Quantizations

| Model | F16 | Q8 | Q5_K_M | Q4_K_M | Notes |
|---|---|---|---|---|---|
| Mistral 7B | 14 GB | 7 GB | 4.5 GB | 3.5 GB | Fastest 7B |
| Llama 2 7B | 14 GB | 7 GB | 4.5 GB | 3.5 GB | Baseline |
| Llama 3 8B | 16 GB | 8 GB | 5 GB | 4 GB | Better than Llama 2 |
| Llama 2 13B | 26 GB | 13 GB | 8 GB | 6.5 GB | Still single-GPU |
| Llama 3 70B | 140 GB | 70 GB | 45 GB | 35 GB | Needs A100/RTX 6000 |
| Mixtral 8x7B | ~90 GB | ~45 GB | ~30 GB | ~22 GB | MoE, sparse |
| Claude 3 Sonnet | ~50 GB | ~25 GB | ~16 GB | ~12 GB | Closed weights, size not public; speculative figures, cannot be run locally |

Add 1–3GB to these for KV cache at modest context lengths and batch size 1; long contexts and larger batches need more.

Batch Size Multiplier

Processing multiple requests in parallel multiplies KV cache:

Llama 2 7B Q4_K_M, 4K context:

    Batch 1: 2.1 GB cache
    Batch 4: 8.6 GB cache
    Batch 8: 17.2 GB cache

Single user? Batch 1 is fine. Running an inference API with 10 concurrent users? Budget for batch 4–8.
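The same multiplication can be run in reverse to ask how many concurrent sequences a card can hold. Here is a rough sketch, with `max_batch_size` as a hypothetical helper; the 20% overhead, the 1 GB safety buffer, and the example inputs are all assumptions rather than measurements:

```python
import math


def max_batch_size(vram_gb, weights_gb, cache_per_seq_gb,
                   overhead_frac=0.20, safety_gb=1.0):
    """Largest number of concurrent sequences whose KV caches fit.

    Weights, a fractional overhead on the weights, and a safety buffer are
    reserved first; the remainder is divided by the per-sequence cache size.
    """
    fixed_gb = weights_gb * (1 + overhead_frac) + safety_gb
    free_gb = vram_gb - fixed_gb
    if free_gb < cache_per_seq_gb:
        return 0
    return math.floor(free_gb / cache_per_seq_gb)


# Hypothetical inputs: 24 GB card, 3.5 GB of weights, 1.0 GB of cache per sequence
print(max_batch_size(24, 3.5, 1.0))
```

Servers that page or share the cache between requests can do better than this static estimate, so read it as a conservative bound.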

Quick Calculator

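    def vram_needed(model_params_b, quantization, context_window,
                    num_layers, kv_dim, batch_size=1, kv_bytes=2):
        """Estimate VRAM in GB (1 GB = 1e9 bytes)."""
        bits = {'f16': 16, 'q8': 8, 'q5': 5, 'q4': 4}

        # Model weights: params (in billions) × bits per weight / 8 bits per byte
        model_gb = model_params_b * bits[quantization] / 8

        # KV cache: 2 (keys and values) × batch × context × layers × KV width × bytes.
        # kv_dim equals hidden_dim for full multi-head attention and is smaller with GQA.
        cache_gb = 2 * batch_size * context_window * num_layers * kv_dim * kv_bytes / 1e9

        # Activations and framework overhead (20% of weights)
        overhead_gb = model_gb * 0.20

        return round(model_gb + cache_gb + overhead_gb, 2)

    # Examples (layer counts and KV widths from the published model configs)
    print(vram_needed(7, 'q4', 4096, num_layers=32, kv_dim=4096))   # Llama 2 7B: ~6.3 GB
    print(vram_needed(70, 'q4', 4096, num_layers=80, kv_dim=1024))  # Llama 2 70B (GQA): ~43.3 GB
    print(vram_needed(13, 'q5', 8192, num_layers=40, kv_dim=5120, batch_size=4))  # Llama 2 13B: ~36.6 GB

Check Your Available VRAM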

Before you start:

    # NVIDIA GPUs
    nvidia-smi --query-gpu=memory.total,memory.free --format=csv

    # AMD GPUs (ROCm)
    rocm-smi --showproductname --showmeminfo vram

Fallback: CPU Offloading

Don’t have enough VRAM? Offload layers to CPU (slower, but works):

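    # Ollama: set the num_gpu option (number of layers placed on the GPU)
    curl http://localhost:11434/api/generate -d '{
      "model": "mistral",
      "prompt": "What is Docker?",
      "options": { "num_gpu": 10 },
      "stream": false
    }'

    # llama.cpp: use -ngl (number of GPU layers); the binary is named llama-cli in newer builds
    ./main -m model.gguf -ngl 20 -p "Your prompt"

GPU layers run fast. CPU layers run ~10x slower. Mixed is a compromise.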
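To pick a starting value for the GPU layer count, you can divide the VRAM you are willing to spend by the approximate size of one layer. This sketch assumes the weights are spread evenly across layers, which is only roughly true, and `gpu_layers_that_fit` and the 2 GB reserve are illustrative choices rather than anything llama.cpp or Ollama computes for you:

```python
import math


def gpu_layers_that_fit(vram_gb, weights_gb, num_layers, reserved_gb=2.0):
    """Estimate how many transformer layers fit on the GPU.

    Assumes weights are spread evenly across layers and that reserved_gb
    covers KV cache, activations, and framework buffers.
    """
    per_layer_gb = weights_gb / num_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(num_layers, math.floor(usable_gb / per_layer_gb))


# A 70B model at Q4 (about 35 GB of weights, assumed 80 layers) on a 24 GB card
print(gpu_layers_that_fit(24, 35, 80))
```

Use the result as a first guess, then lower it if you hit out-of-memory errors at your real context length.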

The Decision Tree

- 8GB GPU? Mistral 7B Q4, 4K context, batch 1.

- 16GB GPU? Llama 2 13B Q4, or 7B Q5 with room to breathe.

- 24GB GPU? Llama 2 13B Q5 comfortably. 70B Q4 needs about 35 GB for weights alone, so it only runs with a large share of layers offloaded to CPU; the same goes for Mixtral 8x7B.

- 40GB+ GPU? You have real options. Test your actual workload.

The Hard Truth

People underestimate overhead by 20–30%.

If you calculate “your model needs 4.5 GB,” don’t load it on a 6GB card. When inference spikes with activations, you’ll get OOM errors mid-generation.

Run the math, subtract 1GB from your available VRAM as a safety buffer, and you’ll actually know what fits.
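That final check fits in a few lines. This is a sketch only: `fits` is a hypothetical helper, and the 25% padding is an assumed midpoint of the 20–30% underestimate mentioned above:

```python
def fits(calculated_gb, card_vram_gb, underestimate_frac=0.25, safety_gb=1.0):
    """True when a padded estimate fits after holding back a safety buffer."""
    padded_gb = calculated_gb * (1 + underestimate_frac)
    return padded_gb <= card_vram_gb - safety_gb


print(fits(4.5, 6))  # the 4.5 GB estimate on a 6 GB card
print(fits(4.5, 8))  # the same estimate on an 8 GB card
```

If your estimate already includes KV cache and overhead, set `underestimate_frac=0` so the padding is not applied twice.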