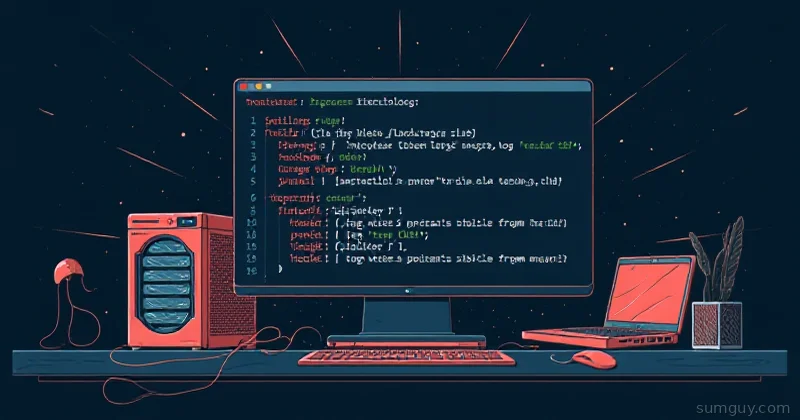

awk Is a Mini-Language

Most people treat awk as a line processor. It is, but it’s also a full programming language with variables, loops, and functions. For logs, you barely need that. Five patterns cover most of the work.

The basic structure:

awk 'pattern { action }' file.log

If the pattern matches, the action runs. If you omit the pattern, the action runs for every line.
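To make the pattern/action split concrete, here is a minimal sketch on a made-up three-line file (demo.log and its contents are hypothetical). It also shows the third combination: a pattern with no action, where awk falls back to printing the matching line.

```shell
# Hypothetical sample data in a scratch directory.
tmp=$(mktemp -d)
printf '%s\n' 'alpha 1' 'beta 2' 'gamma 3' > "$tmp/demo.log"

# Pattern only: the default action prints the whole matching line.
pattern_only=$(awk '$2 > 1' "$tmp/demo.log")

# Action only: runs for every line.
action_only=$(awk '{ print $1 }' "$tmp/demo.log")

# Pattern and action: the action runs only on matching lines.
both=$(awk '$2 > 1 { print $1 }' "$tmp/demo.log")

printf '%s\n' "$pattern_only" '---' "$action_only" '---' "$both"
rm -rf "$tmp"
```

The three results are the lines for beta and gamma, then all three names, then just beta and gamma.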

1. Filter Lines by Condition

Extract 404 errors from an Apache log:

awk '$9 == 404' access.log

$9 is the 9th field (the HTTP status code). This prints every line where status is 404.

More complex: status code AND a path:

awk '$9 == 500 && $7 ~ /api/' access.log

$7 is the path. ~ means “matches regex”. This gets 500 errors from any path that contains api. To match only paths under /api/, anchor the regex: $7 ~ /^\/api\//.

Need the opposite? Use !~:

awk '$7 !~ /favicon|static/' access.log

Exclude favicon and static requests.
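Here is a small sketch of these filters on four invented log lines in the common Apache layout (the addresses, paths, and sizes are made up). It compares the loose /api/ match with an anchored one, since an unanchored regex also matches a path like /capital.

```shell
# Hypothetical sample log; every value below is invented for illustration.
tmp=$(mktemp -d)
cat > "$tmp/access.log" <<'EOF'
10.0.0.1 - - [10/Oct/2024:08:15:02 +0000] "GET /api/users HTTP/1.1" 500 512
10.0.0.2 - - [10/Oct/2024:09:30:45 +0000] "GET /favicon.ico HTTP/1.1" 404 0
10.0.0.3 - - [10/Oct/2024:13:05:10 +0000] "GET /capital HTTP/1.1" 500 128
10.0.0.4 - - [10/Oct/2024:17:20:00 +0000] "GET /index.html HTTP/1.1" 200 2048
EOF

# Unanchored: "api" anywhere in the path, so /capital matches too.
loose=$(awk '$9 == 500 && $7 ~ /api/ { print $7 }' "$tmp/access.log")

# Anchored: only paths that start with /api/.
strict=$(awk '$9 == 500 && $7 ~ /^\/api\// { print $7 }' "$tmp/access.log")

# Negated match: drop favicon and static requests.
kept=$(awk '$7 !~ /favicon|static/ { print $7 }' "$tmp/access.log")

printf 'loose:\n%s\nstrict:\n%s\nkept:\n%s\n' "$loose" "$strict" "$kept"
rm -rf "$tmp"
```

The loose filter returns both /api/users and /capital; the anchored one returns only /api/users.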

2. Count Occurrences

How many 404s are in your log?

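awk '$9 == 404' access.log | wc -l

But awk can count on its own, without the extra process:

awk '$9 == 404 { count++ } END { print count + 0 }' access.log

{ count++ } runs for each matching line. END runs after all lines. print count + 0 outputs the total; the + 0 makes awk print 0 instead of a blank line when nothing matched.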
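A quick sketch of the counting pattern on three invented log lines, including the case where nothing matches. An awk variable that was never assigned prints as an empty string, which is why adding 0 in the END block is a handy habit.

```shell
# Hypothetical sample log with two 404s and one 200.
tmp=$(mktemp -d)
printf '%s\n' \
  '10.0.0.1 - - [10/Oct/2024:08:15:02 +0000] "GET /a HTTP/1.1" 404 0' \
  '10.0.0.2 - - [10/Oct/2024:08:15:03 +0000] "GET /b HTTP/1.1" 200 10' \
  '10.0.0.3 - - [10/Oct/2024:08:15:04 +0000] "GET /c HTTP/1.1" 404 0' \
  > "$tmp/access.log"

# Two lines match, so this prints 2.
found=$(awk '$9 == 404 { count++ } END { print count + 0 }' "$tmp/access.log")

# Nothing matches: "count + 0" forces a numeric 0.
none=$(awk '$9 == 503 { count++ } END { print count + 0 }' "$tmp/access.log")

# Without "+ 0" the unset variable prints as an empty line.
bare=$(awk '$9 == 503 { count++ } END { print count }' "$tmp/access.log")

printf 'found=%s none=%s bare=[%s]\n' "$found" "$none" "$bare"
rm -rf "$tmp"
```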

Count by status code:

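awk '{ status[$9]++ } END { for (code in status) print code, status[code] }' access.log

status[$9] is an associative array keyed by HTTP status. After processing all lines, loop through and print counts. The for (code in status) loop visits keys in no guaranteed order, so pipe the result through sort if the order matters.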

Output:

200 15432
404 234
500 12

3. Sum a Field

Your app logs request latency in milliseconds. What’s the total? The average?

awk -F: '{ total += $3; count++ } END { print "Total:", total, "ms | Average:", total/count, "ms" }' latency.log

-F: sets the field separator to : (useful if your log format uses colons). $3 is the latency field. Accumulate in total, count lines, then divide for average at the end.

Input log lines:

request:user123:145
request:user456:89
request:user789:201

Output:

Total: 435 ms | Average: 145 ms

4. Extract and Reformat

You have tab-separated logs. Extract name and email, reformat as CSV:

awk -F'\t' '{print $2 "," $3}' users.log

Input (tab-separated):

ID	Name	Email
1	alice	alice@example.com
2	bob	bob@example.com

Output:

Name,Email
alice,alice@example.com
bob,bob@example.com

More advanced: extract a time-of-day range:

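awk -F'[: ]' '$5 + 0 >= 9 && $5 + 0 < 17' access.log

-F'[: ]' uses multiple delimiters (colon or space). With that split on an Apache log line, $4 is the date (for example [10/Oct/2024) and $5 is the hour. Adding 0 forces a numeric comparison, so this gets entries from 9 AM up to, but not including, 5 PM. It assumes the client address contains no colons; an IPv6 host shifts the field numbers.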
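Field numbers are easy to get wrong once the separator changes, so it helps to print the fields before filtering on them. Below is a sketch on four invented Apache-style lines (all values hypothetical) that first shows what lands in $4 and $5, then applies an hour filter.

```shell
# Hypothetical sample log with hours 08, 09, 13, and 17.
tmp=$(mktemp -d)
cat > "$tmp/access.log" <<'EOF'
10.0.0.1 - - [10/Oct/2024:08:15:02 +0000] "GET /a HTTP/1.1" 200 10
10.0.0.2 - - [10/Oct/2024:09:30:45 +0000] "GET /b HTTP/1.1" 200 10
10.0.0.3 - - [10/Oct/2024:13:05:10 +0000] "GET /c HTTP/1.1" 200 10
10.0.0.4 - - [10/Oct/2024:17:20:00 +0000] "GET /d HTTP/1.1" 200 10
EOF

# Inspect the split on the first line: $4 is the bracketed date, $5 the hour.
layout=$(awk -F'[: ]' 'NR == 1 { print "4=" $4; print "5=" $5 }' "$tmp/access.log")

# Keep hours 9 through 16; "+ 0" forces a numeric comparison.
business=$(awk -F'[: ]' '$5 + 0 >= 9 && $5 + 0 < 17 { print $5 ":" $6 }' "$tmp/access.log")

printf '%s\n---\n%s\n' "$layout" "$business"
rm -rf "$tmp"
```

On this sample the filter keeps the 09:30 and 13:05 entries and drops the 08:15 and 17:20 ones.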

5. Conditional Formatting

Print lines longer than 1000 characters with line numbers:

awk 'length > 1000 { print NR": " $0 }' largefile.log

length is the line length. NR is the line number. $0 is the entire line.
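If the threshold changes often, one option is to pass it in with awk's -v flag rather than editing the program. This is a sketch on a tiny invented file, with the limit lowered to 10 characters so the effect is visible; the variable name max is arbitrary.

```shell
# Hypothetical sample file with two short and two long lines.
tmp=$(mktemp -d)
printf '%s\n' 'short' 'this line is long' 'tiny' 'another long line here' > "$tmp/sample.log"

# -v sets an awk variable from the command line before the program runs.
long_lines=$(awk -v max=10 'length($0) > max { print NR ": " $0 }' "$tmp/sample.log")

printf '%s\n' "$long_lines"
rm -rf "$tmp"
```

Lines 2 and 4 are printed with their line numbers; the two short lines are skipped.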

Another common one: print lines matching a pattern with context. Here is the version that handles only the 2 lines before each match:

awk '/ERROR/ { for (i = 2; i >= 1; i--) if ((NR - i) in a) print a[NR - i]; print NR": " $0 } { a[NR] = $0 }' app.log

Actually, that’s getting gnarly, and it still doesn’t print the lines after the match. For context, use grep:

grep -B 2 -A 2 'ERROR' app.log

But within awk? Mark important lines:

awk '/ERROR|FATAL/ { print "*** " $0 " ***" } !/ERROR|FATAL/ { print $0 }' app.log

Real Example: Analyzing a Web Server Log

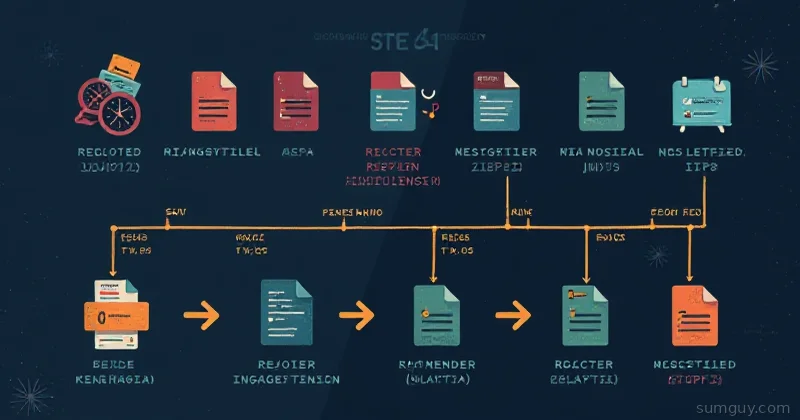

You have an Apache log. You want:

- Count requests per HTTP status

- Find the slowest requests

- Show only non-2xx/3xx responses

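# 1. Counts by status
awk '{ status[$9]++ } END { for (s in status) print s ": " status[s] }' access.log | sort -t: -k2 -rn

# 2. Top 10 slowest requests (assumes the request time is logged as the last field)
awk '{ print $NF, $7 }' access.log | sort -rn | head -10

# 3. Filter to error codes
awk '$9 ~ /^[45]/' access.log

Line 1: count by $9 (status), sort by count descending.

Line 2: print the response time, then the path ($7), and sort by time descending. The default common and combined formats do not log response time ($10 is the response size in bytes), so this assumes your LogFormat appends the time taken (for example %D, in microseconds) as the last field, which awk exposes as $NF.

Line 3: regex match: if the status starts with 4 or 5, print the line.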
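To see the three steps end to end, here is a sketch that runs them on four invented log lines. It assumes a hypothetical log layout with the request time in microseconds appended as the last field, so the timing step reads $NF; adjust the field if your format differs.

```shell
# Hypothetical sample log: common format plus a trailing time-taken field.
tmp=$(mktemp -d)
cat > "$tmp/access.log" <<'EOF'
10.0.0.1 - - [10/Oct/2024:08:15:02 +0000] "GET /api/users HTTP/1.1" 500 512 90321
10.0.0.2 - - [10/Oct/2024:09:30:45 +0000] "GET /favicon.ico HTTP/1.1" 404 0 1200
10.0.0.3 - - [10/Oct/2024:13:05:10 +0000] "GET /index.html HTTP/1.1" 200 2048 4500
10.0.0.4 - - [10/Oct/2024:17:20:00 +0000] "GET /index.html HTTP/1.1" 200 2048 3900
EOF

# 1. Requests per status, most frequent first.
counts=$(awk '{ status[$9]++ } END { for (s in status) print s ": " status[s] }' "$tmp/access.log" | sort -t: -k2 -rn)

# 2. The two slowest requests, by the assumed trailing time field.
slowest=$(awk '{ print $NF, $7 }' "$tmp/access.log" | sort -rn | head -2)

# 3. Only 4xx and 5xx responses.
errors=$(awk '$9 ~ /^[45]/ { print $9, $7 }' "$tmp/access.log")

printf 'counts:\n%s\nslowest:\n%s\nerrors:\n%s\n' "$counts" "$slowest" "$errors"
rm -rf "$tmp"
```

On this sample, status 200 tops the counts with 2 requests, /api/users is the slowest request, and the error filter returns the 500 and the 404.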

When to Stop Using awk

If your log parsing needs:

- Complex regex with named groups

- Multiple passes over the data

- JSON parsing

Then use jq (JSON) or switch to Python. awk is fast and scriptable, but it has limits.

For everything else (filtering, summing, reformatting), awk is the tool from 1977 that still holds its own against the new hotness.