What Is a Named Pipe?

A regular pipe:

    echo "hello" | cat

Data flows in one direction, in real time. Once consumed, it’s gone.

A named pipe (FIFO):

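    mkfifo /tmp/myfifo
    echo "hello" > /tmp/myfifo &
    cat < /tmp/myfifo

A named pipe is an entry in the filesystem that acts like a pipe. The data itself passes through a kernel buffer and is never written to disk, but because the pipe has a path, unrelated processes can open it independently to read and write.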
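To make the "file that acts like a pipe" idea concrete, here is a minimal sketch. The scratch directory from mktemp and the names `fifo`, `is_fifo`, and `received` are assumptions made for this example, not part of the original commands.

```shell
# Sketch: a FIFO is a filesystem entry of type "pipe"; test -p detects it.
tmpdir=$(mktemp -d)            # scratch directory (assumed for this example)
fifo="$tmpdir/demo_fifo"       # hypothetical path
mkfifo "$fifo"

if [ -p "$fifo" ]; then is_fifo=yes; else is_fifo=no; fi
echo "is a FIFO: $is_fifo"

# Round trip: writer in the background, reader in the foreground.
echo "hello" > "$fifo" &
received=$(cat "$fifo")
wait
echo "received: $received"

rm -r "$tmpdir"
```

Running `ls -l` on the path would show a leading `p` in the mode column, which is another quick way to spot a FIFO.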

FIFOs are useful for:

- Buffering: Decouple producer and consumer speed

- Coordination: Multiple processes synchronize on shared data

- Complex pipelines: Distribute data to multiple consumers

Create and Use a Named Pipe

Create a FIFO:

    mkfifo /tmp/myfifo

Write to it:

    echo "data" > /tmp/myfifo &

The & backgrounds the process (otherwise echo waits for a reader).

Read from it:

    cat < /tmp/myfifo

The reader consumes the data, and the writer unblocks.
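The blocking behavior can be observed directly. This sketch backgrounds a writer, checks that it is still alive a second later, and only then attaches a reader. The one-second pause and the variable names are assumptions chosen for illustration.

```shell
# Sketch: a writer on a FIFO stays blocked until a reader opens the other end.
tmpdir=$(mktemp -d)            # scratch directory (assumed for this example)
fifo="$tmpdir/myfifo"
mkfifo "$fifo"

echo "data" > "$fifo" &        # blocks inside open() until a reader appears
writer_pid=$!

sleep 1                        # give the writer time to finish, if it could
if kill -0 "$writer_pid" 2>/dev/null; then
    writer_state=blocked       # still running: no reader has opened the FIFO
else
    writer_state=finished
fi
echo "writer before reader attaches: $writer_state"

received=$(cat "$fifo")        # the reader opens the FIFO; the writer unblocks
wait "$writer_pid"
writer_status=$?
echo "received: $received (writer exit status $writer_status)"

rm -r "$tmpdir"
```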

Clean up:

    rm /tmp/myfifo

Real Example: Fan-Out (One Input, Multiple Consumers)

You have a data stream. Multiple tools need to process it simultaneously.

Without FIFOs, you’d run the source multiple times (wasteful):

    # Slow: runs wget 3 times
    wget -q -O - http://api.example.com/data | grep foo > results1.txt &
    wget -q -O - http://api.example.com/data | grep bar > results2.txt &
    wget -q -O - http://api.example.com/data | grep baz > results3.txt &
    wait

With FIFOs, run the source once and fan out:

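    #!/bin/bash

    # One FIFO per consumer: readers that share a single FIFO split the
    # stream between them instead of each receiving a copy.
    mkfifo /tmp/data_pipe1 /tmp/data_pipe2 /tmp/data_pipe3

    # Consumers: each reads from its own FIFO
    grep foo < /tmp/data_pipe1 > results1.txt &
    grep bar < /tmp/data_pipe2 > results2.txt &
    grep baz < /tmp/data_pipe3 > results3.txt &

    # Producer: fetch once; tee copies the stream into every FIFO
    wget -q -O - http://api.example.com/data |
        tee /tmp/data_pipe1 /tmp/data_pipe2 > /tmp/data_pipe3

    # Wait for the consumers
    wait

    rm /tmp/data_pipe1 /tmp/data_pipe2 /tmp/data_pipe3

The producer runs once, and tee gives every consumer a full copy of the stream. That saves bandwidth and API calls.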
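Here is a small fan-out sketch that runs without network access. It assumes one FIFO per consumer fed by tee, since readers sharing a single FIFO would each see only part of the stream. The sample lines stand in for the API response, and all paths and variable names are hypothetical.

```shell
# Sketch: one producer, two consumers, each consumer sees the whole stream.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
mkfifo "$tmpdir/foo_pipe" "$tmpdir/bar_pipe"

# Consumers start first; each counts matching lines from its own FIFO.
grep -c foo < "$tmpdir/foo_pipe" > "$tmpdir/foo_count" &
grep -c bar < "$tmpdir/bar_pipe" > "$tmpdir/bar_count" &

# Producer: sample data in place of a network fetch. tee writes one copy
# to foo_pipe and its standard output carries the other copy to bar_pipe.
printf '%s\n' "foo 1" "bar 1" "foo 2" "baz 1" |
    tee "$tmpdir/foo_pipe" > "$tmpdir/bar_pipe"

wait
foo_count=$(cat "$tmpdir/foo_count")
bar_count=$(cat "$tmpdir/bar_count")
echo "foo lines: $foo_count, bar lines: $bar_count"

rm -r "$tmpdir"
```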

Real Example: Fan-In (Multiple Inputs, One Consumer)

Multiple data sources, one processor:

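    #!/bin/bash

    # Create two FIFOs
    mkfifo /tmp/log1_pipe
    mkfifo /tmp/log2_pipe

    # Producers: tail remote logs
    ssh server1 "tail -f /var/log/app.log" > /tmp/log1_pipe &
    ssh server2 "tail -f /var/log/app.log" > /tmp/log2_pipe &

    # Consumer: read from both, process errors
    # (--line-buffered makes grep emit each match as soon as it arrives)
    (cat /tmp/log1_pipe & cat /tmp/log2_pipe) | grep --line-buffered "ERROR" | tee /tmp/errors.log

    # Cleanup
    rm /tmp/log1_pipe /tmp/log2_pipe

One grep processes logs from two remote servers as if they were one stream, with lines from the two servers interleaved in arrival order. Because tail -f never exits on its own, the pipeline runs until it is interrupted, and the final rm is reached only once both producers have stopped.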
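The same fan-in shape can be tried locally with finite input. In this sketch two printf commands stand in for the remote tail processes. The sample log lines, paths, and variable names are invented for the example, and the output is sorted only to make it deterministic.

```shell
# Sketch: two producers, two FIFOs, one grep consuming both streams.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
mkfifo "$tmpdir/log1_pipe" "$tmpdir/log2_pipe"

# Producers: made-up log lines in place of ssh ... tail -f
printf '%s\n' "INFO start" "ERROR disk full" > "$tmpdir/log1_pipe" &
printf '%s\n' "ERROR timeout" "INFO done" > "$tmpdir/log2_pipe" &

# Consumer: merge both FIFOs into one stream and keep only the errors.
errors=$(
    { cat "$tmpdir/log1_pipe" & cat "$tmpdir/log2_pipe"; wait; } |
        grep "ERROR" | sort
)
wait
error_count=$(printf '%s\n' "$errors" | wc -l | tr -d ' ')
echo "$errors"
echo "errors found: $error_count"

rm -r "$tmpdir"
```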

Buffering with FIFOs

A FIFO has a limited kernel buffer (the size is OS-dependent; 64 KiB is the Linux default). When it fills, the writer blocks until a reader drains some of it.

Use that to synchronize speed:

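    #!/bin/bash

    mkfifo /tmp/buffer

    # Fast producer: generate data quickly
    generate_data() {
        for i in {1..100}; do
            echo "data_$i"
            sleep 0.01
        done
    }

    # Slow consumer: process one line per second
    process_data() {
        while IFS= read -r line; do
            echo "Processing: $line"
            sleep 1
        done
    }

    # Producer in the background, consumer in the foreground
    generate_data > /tmp/buffer &
    process_data < /tmp/buffer

    rm /tmp/buffer

The producer writes ahead of the consumer, and the kernel buffer absorbs the difference. These 100 short lines fit in the buffer, so the producer finishes early; with a larger stream it would block once the buffer filled. Either way, the consumer pulls data at its own pace.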
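To see the writer block on a full buffer, the stream has to be larger than the buffer. The sketch below pushes 1 MiB of zeros at a reader that waits two seconds before reading anything. The sizes, delays, and names are assumptions picked for the demonstration, on the premise that the pipe buffer is well under 1 MiB.

```shell
# Sketch: a writer stalls once the FIFO's kernel buffer is full.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
fifo="$tmpdir/buffer"
mkfifo "$fifo"

# Slow reader: opens the FIFO immediately but reads nothing for 2 seconds,
# then counts every byte that arrives.
( sleep 2; wc -c ) < "$fifo" > "$tmpdir/bytes_read" &

# Fast writer: 1 MiB, far more than the buffer is assumed to hold.
dd if=/dev/zero bs=1024 count=1024 2>/dev/null > "$fifo" &
writer_pid=$!

sleep 1
if kill -0 "$writer_pid" 2>/dev/null; then
    writer_state=blocked                  # buffer is full, write() is waiting
else
    writer_state=finished
fi

wait
bytes_read=$(tr -d ' ' < "$tmpdir/bytes_read")
echo "writer after 1s: $writer_state, reader received $bytes_read bytes"

rm -r "$tmpdir"
```

No data is lost while the writer waits; it resumes as soon as the reader frees space.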

Synchronization: Wait for Multiple Processes

Use FIFOs to block until conditions are met:

    #!/bin/bash

    # Setup
    mkfifo /tmp/task1_done
    mkfifo /tmp/task2_done
    mkfifo /tmp/task3_done

    # Run three slow tasks in parallel; each signals when it finishes
    { slow_task_1; echo "done" > /tmp/task1_done; } &
    { slow_task_2; echo "done" > /tmp/task2_done; } &
    { slow_task_3; echo "done" > /tmp/task3_done; } &

    # Wait for all three (each read blocks until its task writes)
    read -r < /tmp/task1_done
    read -r < /tmp/task2_done
    read -r < /tmp/task3_done

    echo "All tasks complete"

    rm /tmp/task1_done /tmp/task2_done /tmp/task3_done

This is similar to wait, but more explicit about which task each step is waiting for. Each task signals with ; rather than &&, so a task that fails still writes its line. With &&, a failure would leave the matching read blocked forever.
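A variation worth considering is to send each task's exit status through its FIFO instead of a fixed string, so the waiting script learns whether the task succeeded as well as when it finished. This is a sketch under that assumption. `run_task` is a hypothetical helper, and `sleep 1` and `false` stand in for real tasks.

```shell
# Sketch: each task reports its exit status through its own FIFO.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
mkfifo "$tmpdir/task1_done" "$tmpdir/task2_done"

# Hypothetical helper: run a command, then write its status to a FIFO.
# Usage: run_task FIFO COMMAND [ARGS...]
run_task() {
    fifo=$1
    shift
    rc=0
    "$@" || rc=$?
    echo "$rc" > "$fifo"                  # written even when the task fails
}

run_task "$tmpdir/task1_done" sleep 1 &   # stand-in for a slow task
run_task "$tmpdir/task2_done" false &     # stand-in for a failing task

# Each read blocks until the matching task has reported.
read -r status1 < "$tmpdir/task1_done"
read -r status2 < "$tmpdir/task2_done"
wait
echo "task1 exited with $status1, task2 exited with $status2"

rm -r "$tmpdir"
```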

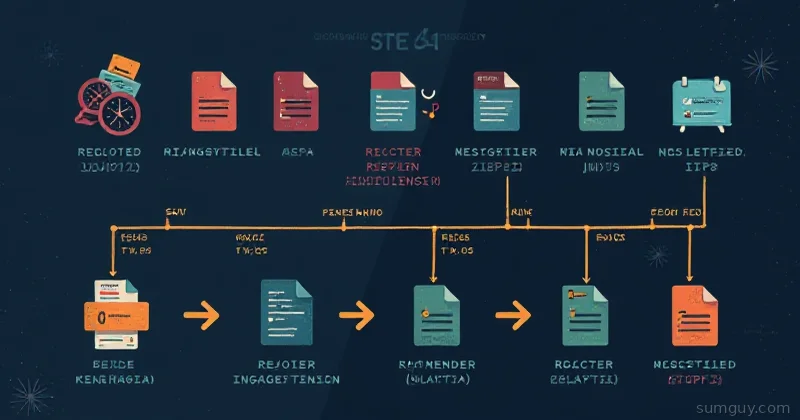

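Real Example: Parallel Image Processing with a Work Queue

Process images in parallel, with workers pulling file names from a shared queue:

    #!/bin/bash

    WORKERS=4
    mkfifo /tmp/img_queue

    # Queue all images
    printf '%s\n' *.jpg > /tmp/img_queue &

    # Open the queue once; every worker inherits this descriptor
    exec 3< /tmp/img_queue

    # Process up to WORKERS in parallel
    for (( i=0; i<WORKERS; i++ )); do
        (
            exec 9> /tmp/img_queue.lock    # each worker opens its own lock handle
            # flock (util-linux, Linux) lets only one worker read a line at a time
            while flock 9 && IFS= read -r img <&3; do
                flock -u 9
                convert "$img" -quality 80 "out_$img"
                echo "$img processed"
            done
        ) &
    done

    wait
    exec 3<&-
    rm /tmp/img_queue /tmp/img_queue.lock

4 workers pull from the queue. As one finishes, it pulls the next image, which keeps all cores busy. Images finish in whatever order the workers complete them, not in queue order. Two details matter here: the queue is opened once so that a late-starting worker cannot hang on a FIFO whose writer has already closed, and reads are serialized because several processes reading lines from one FIFO at the same moment can each grab part of a line.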
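A different way to cap parallelism with a FIFO, named plainly here as a swapped-in technique and not the worker loop above, is a token pool: the FIFO holds one token per allowed job, the parent takes a token before starting a job, and each job returns its token when done. This sketch assumes that opening a FIFO read-write with `exec 3<>` does not block, which holds on Linux but is not guaranteed by POSIX. The item names and log file are invented for the example.

```shell
# Sketch: limit concurrency with a pool of tokens stored in a FIFO.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
mkfifo "$tmpdir/tokens"
exec 3<> "$tmpdir/tokens"                 # read-write open: assumed non-blocking

WORKERS=2
i=0
while [ "$i" -lt "$WORKERS" ]; do         # seed the pool with one token per slot
    echo >&3
    i=$((i + 1))
done

for item in a b c d e; do
    read -r token <&3                     # take a token; blocks when all slots are busy
    (
        echo "$item processed" >> "$tmpdir/done.log"   # stand-in for real work
        echo >&3                          # return the token
    ) &
done

wait
exec 3>&-
processed=$(wc -l < "$tmpdir/done.log" | tr -d ' ')
echo "items processed: $processed"

rm -r "$tmpdir"
```

Only the parent reads from the FIFO in this design, so there is no contention between readers.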

Gotchas and Limitations

FIFOs Block on Open

    mkfifo /tmp/test
    echo "data" > /tmp/test    # Blocks here, waiting for a reader

The writer blocks until a reader opens the FIFO. If you forget the reader, the writer hangs forever.

Solution: Start the reader before you write:

    mkfifo /tmp/test
    cat < /tmp/test > output.txt &    # Start reader first
    echo "data" > /tmp/test           # Then write
    wait

Cleanup on Interruption

    #!/bin/bash

    trap 'rm -f /tmp/fifo; exit' INT TERM

    mkfifo /tmp/fifo
    # ... code ...
    rm /tmp/fifo

Use trap to clean up if the script is interrupted.
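A related option is to trap EXIT, which also covers a script that stops early because of an error. The sketch below simulates that with a subshell that exits with status 3 before reaching any explicit rm. The status value and the names are arbitrary choices for the example.

```shell
# Sketch: an EXIT trap removes the FIFO even when the script bails out early.
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
fifo="$tmpdir/fifo"

child_status=0
(
    trap 'rm -f "$fifo"' EXIT             # runs whenever this subshell exits
    mkfifo "$fifo"
    [ -p "$fifo" ] && echo "created" > "$tmpdir/state"
    exit 3                                # simulated failure before any cleanup line
) || child_status=$?

if [ -e "$fifo" ]; then leftover=yes; else leftover=no; fi
state=$(cat "$tmpdir/state")
echo "FIFO was $state, exit status $child_status, left behind: $leftover"

rm -r "$tmpdir"
```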

Platform Differences

FIFOs work on Linux, macOS, and the BSDs. Windows has no native mkfifo; there you need a POSIX layer such as WSL or Cygwin.

Not for Large Data

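A FIFO holds only a small kernel buffer in memory, so it cannot store a backlog. Data can stream through it for as long as both ends stay open, but the writer stalls whenever the reader falls behind. If gigabytes of data need to wait for a consumer, use disk-backed queues or message brokers.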

When to Use FIFOs

Good for:

- Distributing work among parallel workers

- Coordinating multiple processes

- Streaming data through multiple filters

Not good for:

- Large backlogs that must be stored until a consumer is ready (the buffer holds only kilobytes)

- Persistent state (process dies, data is gone)

- Across machines (use ssh pipelines, sockets, or message queues)

Alternative: Process Substitution

Remember process substitution from the last article?

    diff <(command1) <(command2)

Bash wires that up with pipes for you. On systems that provide /dev/fd (Linux and macOS included), each <(...) becomes an anonymous pipe exposed as a /dev/fd/N path; where /dev/fd is unavailable, bash falls back to creating named pipes.

Named pipes (explicit) vs process substitution (implicit):

    # Process substitution (implicit pipe)
    diff <(echo "a") <(echo "b")

    # Named pipes (explicit FIFO)
    mkfifo /tmp/f1 /tmp/f2
    echo "a" > /tmp/f1 &
    echo "b" > /tmp/f2 &
    diff /tmp/f1 /tmp/f2
    rm /tmp/f1 /tmp/f2

Process substitution is cleaner for one-off cases. Named pipes are better when you need explicit control or want to reuse the FIFOs.
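To check that the two forms behave the same, this sketch runs both and compares the diff output. It assumes bash is installed and calls it through `bash -c`, so the surrounding script stays portable. The variable names are made up for the example, and the exact path printed for `<(true)` varies by system.

```shell
# Sketch: process substitution and explicit FIFOs produce the same diff.
subst_path=$(bash -c 'echo <(true)')      # the path bash substitutes for <(...)
echo "process substitution expands to: $subst_path"

# Implicit form (diff exits 1 when inputs differ, hence the || true)
implicit=$(bash -c 'diff <(echo "a") <(echo "b")' || true)

# Explicit form with named pipes
tmpdir=$(mktemp -d)                       # scratch directory (assumed)
mkfifo "$tmpdir/f1" "$tmpdir/f2"
echo "a" > "$tmpdir/f1" &
echo "b" > "$tmpdir/f2" &
explicit=$(diff "$tmpdir/f1" "$tmpdir/f2" || true)
wait

if [ "$implicit" = "$explicit" ]; then same_output=yes; else same_output=no; fi
echo "same diff output: $same_output"

rm -r "$tmpdir"
```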

Bottom Line

FIFOs solve real problems: buffering, fan-out, synchronization. They’re underused because people don’t think about them. But in bash, they’re a legit tool for coordination.

The key insight: a FIFO is just a pipe with a name. Once you name it, you can have multiple processes read from or write to it at different times. That flexibility unlocks patterns you can’t do with regular pipes.