The Problem: Silent Failures

Write a bash script without strict mode. It does this:

    #!/bin/bash
    cd /nonexistent/path
    rm -rf *

Script runs. Doesn’t cd because the path doesn’t exist. But it continues anyway. Then it deletes everything in the current directory. Your morning is ruined.

Bash defaults to ignoring errors. That’s insane for scripts. set -euo pipefail fixes it.

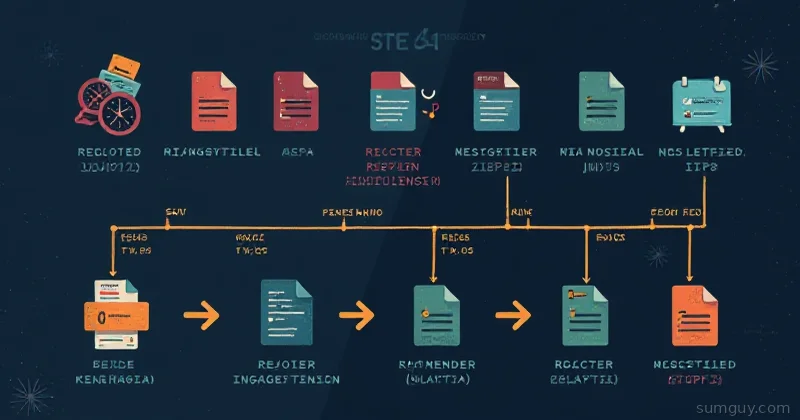

What Each Flag Does

set -e (errexit)

Exit immediately if any command exits with a non-zero status.

    #!/bin/bash
    set -e

    cd /nonexistent
    echo "This never runs"

The cd fails. Script exits. echo never runs.

Without set -e, the script continues and echo runs in the wrong directory.

Gotcha: Commands in an if-statement don’t trigger -e:

    set -e

    if cd /nonexistent; then
        echo "OK"
    else
        echo "Failed"
    fi

    echo "Script continues"

This runs to completion because cd is in a conditional. The script wants you to handle it explicitly.

set -u (nounset)

Treat undefined variables as errors.

    #!/bin/bash
    set -u

    echo "$UNDEFINED_VAR"

Bash prints an error ending in UNDEFINED_VAR: unbound variable, and the script exits.

Without set -u, it echoes an empty line. Silent failure. You spend an hour debugging why your script doesn’t work.
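To watch both behaviors side by side, you can expand the same unset variable in two child shells. A rough sketch; LC_ALL=C is only there to keep the error message in English, and the exact prefix of that message varies between bash versions.

```bash
#!/bin/bash
# Make sure the variable is not inherited from the environment.
unset UNDEFINED_VAR

# Without -u: the expansion silently becomes an empty string.
without_u=$(LC_ALL=C bash -c 'echo "value=[$UNDEFINED_VAR]"' 2>&1)
without_status=$?

# With -u: bash reports the unbound variable and the child exits.
with_u=$(LC_ALL=C bash -c 'set -u; echo "value=[$UNDEFINED_VAR]"' 2>&1)
with_status=$?

echo "without -u: '$without_u' (status $without_status)"
echo "with -u:    '$with_u' (status $with_status)"
```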

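Gotcha: Positional parameters like $1 count as unset when the caller omits the argument, so referencing them directly is an error under -u. ($@ and $* are special: -u exempts them.) If you want to safely handle missing parameters: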

    #!/bin/bash
    set -u

    filename="${1:-default.txt}"

The ${1:-default.txt} syntax means: use $1 if set, otherwise use “default.txt”. No error.
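The default-value form has two useful siblings. The sketch below shows all three under -u; describe_arg and the file names are hypothetical, chosen only for the demo.

```bash
#!/bin/bash
set -u

# Hypothetical helper showing expansions that are safe under -u.
describe_arg() {
  local name="${1:-default.txt}"   # fall back when $1 is unset or empty
  local given="no"
  # ${1+x} expands to "x" only if $1 is set (even to an empty string)
  if [ -n "${1+x}" ]; then
    given="yes"
  fi
  echo "name=$name given=$given"
}

no_arg=$(describe_arg)
with_arg=$(describe_arg report.txt)

# ${1:?message} aborts with the message when $1 is missing. It runs in a
# child shell here so the abort does not end this demo.
required_status=0
required_msg=$(bash -c 'set -u; : "${1:?filename required}"' demo 2>&1) || required_status=$?

echo "$no_arg"
echo "$with_arg"
echo "required check: status=$required_status message='$required_msg'"
```

Use :- when a default makes sense, + when you only need to know whether something was passed, and :? when the argument is mandatory.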

set -o pipefail

If any command in a pipe fails, the entire pipe fails.

    #!/bin/bash
    set -o pipefail

    cat nonexistent.txt | grep "ERROR" | wc -l

Without pipefail, cat fails but the pipeline returns the status of wc (success). The error is hidden.

With pipefail, the pipeline returns a non-zero status because one of its stages failed, and combined with -e the script exits. You see the problem.

Real example:

    curl https://api.example.com/data | jq '.results'

If curl fails (network error), the script should fail. Without pipefail, jq succeeds on empty input and the script continues. Hours of debugging later, you realize there was no data.
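You can check the status rules without a network by substituting stand-ins. In this sketch, false plays the failing producer (cat or curl above) and cat plays a consumer that is happy with empty input; the variable names are illustrative.

```bash
#!/bin/bash
# Default behavior: the pipeline's status is that of the last command.
false | cat
default_status=$?

# With pipefail: a failing stage makes the whole pipeline fail.
set -o pipefail
false | cat
pipefail_status=$?

# PIPESTATUS holds one status per stage of the most recent pipeline.
false | cat
stage_statuses="${PIPESTATUS[*]}"
set +o pipefail

echo "default:    $default_status"
echo "pipefail:   $pipefail_status"
echo "per stage:  $stage_statuses"
```

PIPESTATUS is handy when you need to know which stage failed, not just that one did.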

Putting It Together

    #!/bin/bash
    set -euo pipefail

    # Declare variables before use
    readonly LOG_FILE="${1:-/var/log/app.log}"
    readonly PROCESSED_DIR="/tmp/processed"

    # Early exit if dependencies are missing
    command -v jq >/dev/null || { echo "jq is required" >&2; exit 1; }

    mkdir -p "$PROCESSED_DIR"
    cd "$PROCESSED_DIR" || exit 1

    # This pipe will exit if any command fails
    # (including grep, which returns 1 when nothing matches)
    cat "$LOG_FILE" | grep "ERROR" | wc -l

-e: Script exits if any command fails

-u: Script exits if you reference $UNDEFINED

-o pipefail: Script exits if any command in a pipe fails
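One wrinkle with a grep pipeline under strict mode: grep exits 1 when nothing matches, which -e and pipefail treat as a failure. If “zero errors” is a valid answer for you, a sketch like this separates “no match” from a real grep error. count_errors and the sample log lines are hypothetical.

```bash
#!/bin/bash
set -euo pipefail

# Hypothetical helper: count lines containing ERROR in a file.
# grep exits 0 on a match, 1 on no match, and greater than 1 on a real
# error, so only statuses above 1 are passed on as failures.
count_errors() {
  local file=$1 count status=0
  count=$(grep -c "ERROR" "$file") || status=$?
  if [ "$status" -gt 1 ]; then
    return "$status"
  fi
  echo "$count"
}

demo_log=$(mktemp)
printf 'INFO start\nERROR disk full\nINFO retry\nERROR timeout\n' > "$demo_log"
two=$(count_errors "$demo_log")

printf 'INFO all good\n' > "$demo_log"
zero=$(count_errors "$demo_log")
rm -f "$demo_log"

echo "with errors: $two"
echo "clean log:   $zero"
```

A missing file still fails loudly, because grep reports that case with a status above 1.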

Common Gotchas and Fixes

Testing with test or [[ ]]

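These return non-zero on false. With -e, a bare test that evaluates to false ends the script, so where you put the test matters:

    set -e

    if [ -f "$file" ]; then
        echo "File exists"
    fi

This is fine: [ is in a conditional.

This one is subtler:

    set -e

    [ -f "$file" ] && echo "File exists"

Why? && isn’t a conditional; it’s a command list. Bash doesn’t exit when the left side of && fails, but the line as a whole still returns non-zero when the test is false. If that line is the last command in a function (or in the script), the non-zero status propagates, and -e kills the script at the call site.

Fix:

    [ -f "$file" ] || echo "File missing"

Or put it in an if:

    if [ -f "$file" ]; then
        echo "File exists"
    fi

Returning from Functions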

Functions respect -e. If a command in a function fails, the function exits and the script exits:

    set -e

    process_file() {
        cat "$1" | grep "ERROR"
        echo "Processed"  # Runs only if grep succeeds
    }

    process_file /var/log/app.log

If the file doesn’t exist or has no errors, grep fails, the function exits, and the script exits. The echo never runs.
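A function’s exit status is the status of its last command, which can bite even when nothing actually went wrong. The sketch below runs two tiny scripts in child shells: one ends a function with a short-circuit test, the other uses an if. The announce function and the path are hypothetical.

```bash
#!/bin/bash
# Risky: when the test is false, the && list returns 1, the function
# returns 1, and -e ends the script at the call site.
risky=$(cat <<'EOF'
set -e
announce() { [ -f /nonexistent/file ] && echo "found"; }
announce
echo "after announce"
EOF
)

# Safer: an if with a false condition and no else branch returns 0.
fixed=$(cat <<'EOF'
set -e
announce() {
  if [ -f /nonexistent/file ]; then
    echo "found"
  fi
}
announce
echo "after announce"
EOF
)

risky_out=$(bash -c "$risky")
risky_status=$?
fixed_out=$(bash -c "$fixed")
fixed_status=$?

echo "risky: output='$risky_out' status=$risky_status"
echo "fixed: output='$fixed_out' status=$fixed_status"
```

The risky version dies silently after the function call. The fixed version reaches the final echo.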

Disabling -e Temporarily

Sometimes you want to ignore an error. Use set +e:

    set -e

    set +e
    rm /tmp/maybe_doesnt_exist.txt
    set -e

    echo "Continued"

Or run a command with || true:

    set -e

    rm /tmp/maybe_doesnt_exist.txt || true
    echo "Continued"

|| true means: if rm fails, run true (which always succeeds). The script continues.
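If you want to tolerate the failure but still know that it happened, record the status instead of throwing it away. A minimal sketch; ls on a missing path is just a stand-in for any command that is allowed to fail, and the variable names are illustrative.

```bash
#!/bin/bash
set -e

# The || branch keeps -e from firing and stores the real exit status.
status=0
ls /nonexistent/maybe_missing >/dev/null 2>&1 || status=$?

if [ "$status" -ne 0 ]; then
  outcome="failed with status $status"
else
  outcome="succeeded"
fi

echo "$outcome"
```

Unlike || true, this keeps the exit code around for logging or for a later decision.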

The Positional-Argument Gotcha With -u

One subtle issue with -u: $@ and $* are exempt and simply expand to nothing when no arguments are passed, but individual positional parameters like $1 and $2 are not. With -u, you’ll get “unbound variable” errors if you reference them when the caller didn’t supply them.

Safe pattern for optional args:

    #!/bin/bash
    set -euo pipefail

    # This will fail with -u if no args passed:
    # first_arg="$1"

    # Safe: use default value syntax
    first_arg="${1:-}"           # Empty string if not provided
    named_arg="${2:-default}"    # "default" if not provided

    if [ -n "$first_arg" ]; then
        echo "Got arg: $first_arg"
    fi

The ${VAR:-default} syntax is your friend under set -u. It provides a default value instead of triggering the unbound variable error.
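When a script takes a variable number of arguments, $# and "$@" are the tools that stay safe under -u. A short sketch, with join_args as a hypothetical helper:

```bash
#!/bin/bash
set -euo pipefail

# Hypothetical helper: wrap each argument in brackets and report the count.
# $# and "$@" never trigger an unbound variable error, even with no args.
join_args() {
  local joined="" arg
  for arg in "$@"; do
    joined+="[$arg]"
  done
  echo "count=$# items=$joined"
}

none=$(join_args)
three=$(join_args a b c)

echo "$none"
echo "$three"
```

Checking $# first, then indexing into $1, $2 and so on only when they exist, avoids the error without sprinkling defaults everywhere.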

Add an ERR Trap

Pair strict mode with a trap to print where the script died:

    #!/bin/bash
    set -euo pipefail

    trap 'echo "Error on line $LINENO"' ERR

    echo "Step 1"
    false           # This triggers the trap
    echo "Step 2"   # Never runs

Output:

    Step 1
    Error on line 7

For debugging, print more context:

    trap 'echo "Error on line $LINENO: $BASH_COMMAND"' ERR

Now you see the exact command that failed. Beats staring at a silent non-zero exit code.
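One caveat: by default the ERR trap is not inherited by functions, so a failure inside a function can slip past it. Adding -E (errtrace) to the set line covers that case. The sketch below runs a child script and captures what the trap prints; fail_inside_function is a made-up name, and the reported line number depends on how the script is laid out.

```bash
#!/bin/bash
# Child script held in a variable so the parent can inspect its output.
child_script=$(cat <<'EOF'
set -Eeuo pipefail
trap 'echo "exit $? on line $LINENO: $BASH_COMMAND" >&2' ERR

fail_inside_function() {
  false
}

echo "Step 1"
fail_inside_function
echo "Step 2"
EOF
)

# Capture stdout and stderr together, plus the child's exit status.
report=$(bash -c "$child_script" 2>&1)
child_status=$?

echo "$report"
echo "child exited with status $child_status"
```

The report shows Step 1, then the trap’s message naming false as the failing command. Step 2 never appears.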

Bottom Line

Every bash script over 20 lines should start with:

    #!/bin/bash
    set -euo pipefail

It makes scripts fail fast instead of corrupting data at 2 AM. Your ops team will thank you.