The Load Balancer You Keep Ignoring

You’ve probably heard of HAProxy. Maybe you’ve seen it mentioned in a Stack Overflow thread, or noticed it in the config of some open source project. Then you clicked through to the documentation, saw phrases like “nbthread”, “tune.ssl.default-dh-param”, and “maxconnrate”, and quietly closed the tab.

Here’s the thing: HAProxy has a reputation for complexity that it doesn’t entirely deserve. Yes, it has 500+ config options. No, you don’t need to understand them all. The 20 or so options you’ll actually use are straightforward, and what you get in return is a load balancer that has run in production at sites like GitHub, Reddit, and Instagram.

Let’s demystify it.

What HAProxy Actually Is

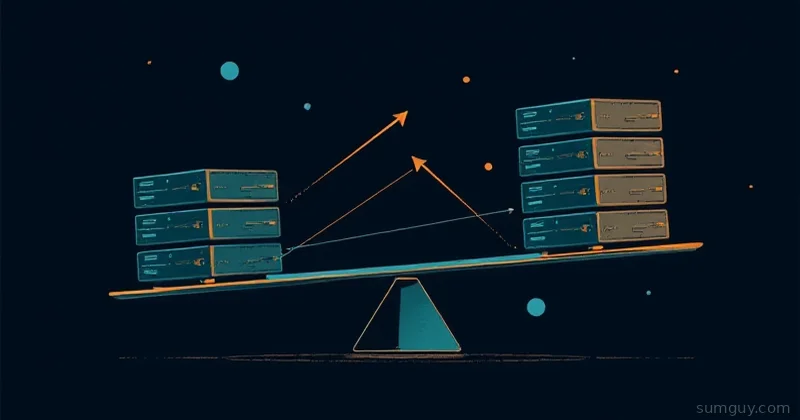

HAProxy (High Availability Proxy) is a battle-tested TCP and HTTP load balancer and proxy server that’s been around since 2000. It operates at both Layer 4 (TCP) and Layer 7 (HTTP), which means it can route raw TCP connections and make smart routing decisions based on HTTP headers, URLs, and cookies.

Why reach for HAProxy instead of nginx or Traefik?

- TCP load balancing: Traefik is HTTP-first. HAProxy doesn’t care — it’ll proxy Postgres, Redis, MQTT, or whatever you’re running.

- Granular health checks: Rise/fall thresholds, custom HTTP check paths, check intervals in milliseconds.

- High connection counts: HAProxy is event-driven, and current versions run as a single multithreaded process. It handles tens of thousands of concurrent connections without breaking a sweat.

- Stats dashboard: Built-in real-time monitoring, no sidecar required.

Nginx can load balance. Traefik can load balance. But when you need serious control over how traffic flows, HAProxy is the tool that was built specifically for this job.

Config Structure: Four Sections, That’s It

HAProxy configs are built from four main sections. Once you know what each one does, the rest is just filling in blanks.

    global                  # Process-level settings: logging, limits, SSL

    defaults                # Defaults for all frontends and backends that follow

    frontend my_frontend    # Where traffic comes IN: ports, ACLs, routing decisions

    backend my_backend      # Where traffic goes OUT: server list, balancing algo, health checks

That’s the whole mental model. Traffic enters through a frontend, gets routed by ACLs, and lands on a backend where your actual servers live.

A Real Config, Explained

Here’s a complete HAProxy config for 3 app containers with health checks, a stats page, and HTTP routing:

    global
        log stdout format raw local0
        maxconn 50000

    defaults
        log global
        mode http
        option httplog
        option dontlognull
        timeout connect 5s
        timeout client 30s
        timeout server 30s

    #---------------------------------------------------------------------
    # Stats page: keep this locked down or firewall it
    #---------------------------------------------------------------------
    frontend stats
        bind *:8404
        stats enable
        stats uri /stats
        stats refresh 10s
        stats auth admin:supersecretpassword
        stats admin if TRUE

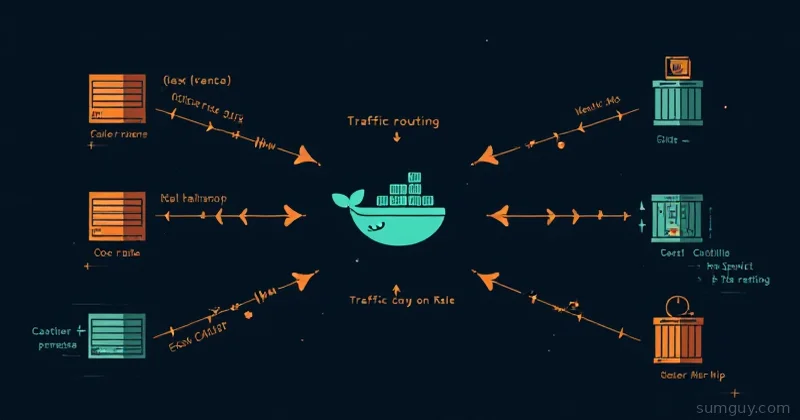

    #---------------------------------------------------------------------
    # Main HTTP frontend
    #---------------------------------------------------------------------
    frontend http_in
        bind *:80

        # ACL: route /api/* to the API backend
        acl is_api path_beg /api/

        use_backend api_servers if is_api
        default_backend app_servers

    #---------------------------------------------------------------------
    # App backend: round-robin across 3 containers
    #---------------------------------------------------------------------
    backend app_servers
        balance roundrobin
        option httpchk GET /health
        http-check expect status 200

        server app1 app1:3000 check inter 5s rise 2 fall 3
        server app2 app2:3000 check inter 5s rise 2 fall 3
        server app3 app3:3000 check inter 5s rise 2 fall 3

    #---------------------------------------------------------------------
    # API backend: least connections
    #---------------------------------------------------------------------
    backend api_servers
        balance leastconn
        option httpchk GET /api/health
        http-check expect status 200

        server api1 api1:4000 check inter 5s rise 2 fall 3
        server api2 api2:4000 check inter 5s rise 2 fall 3

Let’s break down the interesting bits.

Frontends: Where Traffic Enters

The frontend is your entry point. bind *:80 listens on all interfaces on port 80. You can bind multiple ports, add SSL here, or split traffic by port.
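To make the "multiple ports" and "split traffic by port" ideas concrete, here is a minimal sketch. The second port (8080) and the `on_alt_port` ACL name are assumptions made up for illustration; the backends are the ones from the config above.

```haproxy
# Sketch only: port 8080 and the ACL name are illustrative assumptions.
frontend http_in
    bind *:80                    # main listening port, all interfaces
    bind *:8080                  # a second port on the same frontend

    # dst_port is the port the client connected to
    acl on_alt_port dst_port 8080

    use_backend api_servers if on_alt_port
    default_backend app_servers
```

Whether one frontend with two binds or two separate frontends reads better depends on how different the routing rules are for each port.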

ACLs are the routing brain. They test conditions and let you make use_backend decisions:

    # Match by path prefix
    acl is_api path_beg /api/

    # Match by hostname
    acl is_blog hdr(host) -i blog.example.com

    # Match by request method
    acl is_post method POST

    # Combine conditions (listing two ACLs means AND)
    use_backend heavy_backend if is_api is_post

The use_backend rules are evaluated top to bottom, and the first one whose condition matches wins. If nothing matches, default_backend catches it.
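Conditions can also be negated or OR-ed together. The sketch below shows how rule order plays out; the backend names `api_writes` and `blog_servers` are hypothetical and would need matching backend sections.

```haproxy
# Sketch only: api_writes and blog_servers are hypothetical backends.
frontend http_in
    bind *:80

    acl is_api  path_beg /api/
    acl is_blog hdr(host) -i blog.example.com
    acl is_post method POST

    # Checked first: API requests that are POSTs
    use_backend api_writes if is_api is_post

    # Checked second: "!" negates, so this is API requests that are not POSTs
    use_backend api_servers if is_api !is_post

    # "or" joins alternatives; braces define an anonymous ACL inline
    use_backend blog_servers if is_blog or { path_beg /blog/ }

    # Nothing matched
    default_backend app_servers
```

Because the first match wins, putting the most specific rules at the top keeps the behavior easy to reason about.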

Backends: Where Traffic Goes

The backend defines your server pool and how HAProxy distributes load.

Balancing algorithms:

- roundrobin: Requests cycle through servers in order. Simple, predictable.

- leastconn: Next request goes to the server with the fewest active connections. Great for long-lived connections or anything with variable processing time.

- source: Hashes the client IP so the same client keeps hitting the same server as long as the server pool doesn’t change. Poor man’s sticky sessions.

- random: Randomly draws 2 servers and sends the request to the less loaded one. Surprisingly effective at scale.

For most web apps, roundrobin is fine. Use leastconn when requests have wildly different durations (file uploads, video transcoding, etc.).
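As a rough illustration of how these choices look in a config, here is a sketch with two hypothetical backends (`weighted_apps` and `sticky_by_ip` are made-up names, and the weights are arbitrary). It also uses two options not covered above: `weight`, which skews the share a server gets, and `hash-type consistent`, which limits how many clients get remapped when a server joins or leaves.

```haproxy
# Sketch only: backend names and weights are illustrative assumptions.
backend weighted_apps
    balance roundrobin
    server app1 app1:3000 check weight 2   # receives about twice the share
    server app2 app2:3000 check weight 1
    server app3 app3:3000 check weight 1

backend sticky_by_ip
    balance source
    hash-type consistent                   # fewer remapped clients on pool changes
    server app1 app1:3000 check
    server app2 app2:3000 check
    server app3 app3:3000 check
```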

Health Checks: The Part That Actually Matters

This is where HAProxy earns its keep. Health checks are what let you pull a container out of rotation without downtime.

    server app1 app1:3000 check inter 5s rise 2 fall 3

- check: Enable health checking for this server

- inter 5s: Check every 5 seconds

- rise 2: Server needs 2 consecutive successes to go from DOWN to UP

- fall 3: Server needs 3 consecutive failures to go from UP to DOWN

The rise/fall thresholds prevent flapping. A server that’s struggling but not fully dead won’t get pulled at the first hiccup, and won’t get added back until it’s actually stable.
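With `inter 5s` and `fall 3`, a dead server is pulled after roughly 3 x 5s = 15 seconds, and with `rise 2` it comes back after about 10 seconds of passing checks. If every server shares the same thresholds, a `default-server` line avoids repeating them. This is a sketch of the same backend as above, not a required layout:

```haproxy
# Sketch only: same servers as the main config, thresholds declared once.
backend app_servers
    balance roundrobin
    option httpchk GET /health
    http-check expect status 200

    # Applies to every server line that follows in this backend
    default-server check inter 5s rise 2 fall 3

    server app1 app1:3000
    server app2 app2:3000
    server app3 app3:3000
```

A single server can still override a value by setting it on its own line.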

For HTTP checks:

    option httpchk GET /health
    http-check expect status 200

HAProxy sends a real HTTP request and checks the response code. Your /health endpoint can check whatever you want (DB connectivity, cache availability), whatever signals “I’m ready.”
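If the app only answers when it sees a particular Host header, newer HAProxy releases (2.2 and later, as far as I know) let you spell out the check request with `http-check send`. The hostname below is a placeholder assumption:

```haproxy
# Sketch only: app.example.com is a placeholder hostname.
backend app_servers
    option httpchk
    http-check send meth GET uri /health ver HTTP/1.1 hdr Host app.example.com
    http-check expect status 200

    server app1 app1:3000 check inter 5s rise 2 fall 3
```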

For TCP-only services (Redis, Postgres, etc.):

    backend postgres_backend
        mode tcp
        balance leastconn
        option tcp-check
        server db1 db1:5432 check inter 10s rise 2 fall 3

Sticky Sessions

Round-robin means each request can land on a different server. That’s usually fine, but some apps store session state in memory. If user A’s session is on app2 and their next request hits app3, things break.

The fix is a cookie-based sticky session:

    backend app_servers
        balance roundrobin
        cookie SERVERID insert indirect nocache

        server app1 app1:3000 check cookie app1
        server app2 app2:3000 check cookie app2
        server app3 app3:3000 check cookie app3

HAProxy injects a SERVERID cookie on the first response. Subsequent requests from that client carry the cookie, and HAProxy routes them to the same server. When that server is down, HAProxy ignores the cookie and picks another one.

Honest advice: if you’re building something new, store sessions in Redis and skip sticky sessions entirely. But if you’re load balancing a legacy app you can’t modify, this saves you.

SSL Termination

HAProxy can terminate SSL so your backends receive plain HTTP:

    frontend https_in
        bind *:443 ssl crt /etc/haproxy/certs/example.com.pem
        default_backend app_servers

The .pem file should be your certificate + private key concatenated. HAProxy handles the TLS handshake; backends see unencrypted HTTP.
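Once TLS terminates at HAProxy, two follow-ups commonly come up: sending port-80 visitors to HTTPS, and telling the backend that the original request was encrypted. Here is a hedged sketch of both; the header names are conventions rather than anything HAProxy requires, and your app may expect different ones.

```haproxy
# Sketch only: a variation on the frontends above, not a drop-in replacement.
frontend http_in
    bind *:80
    # Everything arriving on plain HTTP is sent to the HTTPS URL
    http-request redirect scheme https code 301

frontend https_in
    bind *:443 ssl crt /etc/haproxy/certs/example.com.pem

    # Backends only see plain HTTP, so pass along what the client used
    http-request set-header X-Forwarded-Proto https
    option forwardfor            # adds X-Forwarded-For with the client IP

    default_backend app_servers
```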

For SSL passthrough (HAProxy doesn’t decrypt, just routes):

    frontend https_passthrough
        bind *:443
        mode tcp
        default_backend ssl_backends

    backend ssl_backends
        mode tcp
        server app1 app1:443 check

Use passthrough when backends handle their own TLS (mutual auth, client certs, etc.) or when you have compliance requirements about where decryption happens.
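Passthrough doesn’t have to mean "one backend for everything". HAProxy can peek at the SNI hostname in the TLS ClientHello without decrypting the traffic and route on that. A sketch, assuming a hypothetical `ssl_api` backend and an `api.example.com` hostname:

```haproxy
# Sketch only: ssl_api, api1:443 and api.example.com are illustrative assumptions.
frontend https_passthrough
    bind *:443
    mode tcp

    # Wait briefly for the ClientHello so the SNI field can be read
    tcp-request inspect-delay 5s
    tcp-request content accept if { req.ssl_hello_type 1 }

    use_backend ssl_api if { req.ssl_sni -i api.example.com }
    default_backend ssl_backends

backend ssl_api
    mode tcp
    server api1 api1:443 check
```

The traffic stays encrypted end to end; only the unencrypted hostname in the handshake is inspected.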

Docker Compose Setup

Here’s the full Docker Compose for the 3-app setup:

    services:
      haproxy:
        image: haproxy:3.0-alpine
        ports:
          - "80:80"
          - "8404:8404"
        volumes:
          - ./haproxy.cfg:/usr/local/etc/haproxy/haproxy.cfg:ro
        depends_on:
          - app1
          - app2
          - app3

      app1:
        image: your-app:latest
        environment:
          - NODE_ENV=production

      app2:
        image: your-app:latest
        environment:
          - NODE_ENV=production

      app3:
        image: your-app:latest
        environment:
          - NODE_ENV=production

Note that the config above also points at api1 and api2. HAProxy resolves server hostnames at startup by default, so either add matching services for them or drop the api_servers backend before starting.

Bring it up:

    docker compose up -d

Then hit http://localhost:8404/stats with the credentials you set. You’ll see a live dashboard showing request rates, response times, server status, and health check results for every backend. It’s genuinely useful, not just for debugging but also for understanding your traffic patterns.
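Since that dashboard is published on port 8404, a password alone is thin protection. One possible way to tighten it is to reject requests from outside your own network before the stats page is served. The address ranges below are assumptions (the standard private ranges), and behind Docker’s port publishing the source address HAProxy sees may be a bridge gateway rather than the real client, so test this before relying on it.

```haproxy
# Sketch only: the allowed ranges are assumptions; adjust to your network.
frontend stats
    bind *:8404

    acl from_internal src 10.0.0.0/8 172.16.0.0/12 192.168.0.0/16
    http-request deny unless from_internal

    stats enable
    stats uri /stats
    stats refresh 10s
    stats auth admin:supersecretpassword
```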

Reload Without Downtime

When you update haproxy.cfg, you don’t restart HAProxy — you reload it. Existing connections finish normally while new connections use the updated config:

    # Validate config first
    docker compose exec haproxy haproxy -c -f /usr/local/etc/haproxy/haproxy.cfg

    # Reload without downtime
    docker compose kill -s HUP haproxy

The -c flag is a dry run. Always run it before reloading. HAProxy will tell you exactly what’s wrong if the config has errors, which is more than can be said for some other tools.

The 20 Options You Actually Need

Here’s what you just learned to use:

- bind, mode, timeout (global plumbing)

- balance roundrobin|leastconn|source (traffic distribution)

- server <name> <host>:<port> check (backend definition)

- option httpchk, http-check expect status (health checks)

- inter, rise, fall (check thresholds)

- acl, use_backend, default_backend (routing)

- cookie ... insert (sticky sessions)

- ssl crt (SSL termination)

- stats enable, stats uri, stats auth (dashboard)

That’s it. That’s the HAProxy you’ll use 95% of the time. The other 480 options exist for edge cases you’ll know you have when you have them.

HAProxy’s reputation for complexity is mostly documentation shock — the manual is exhaustive because it covers everything. But a working config for a real service fits in 50 lines. Now you have one.