You Pulled a Model. Now What?

Congratulations. You ran ollama pull llama3 and had a conversation with a locally-running LLM. You felt like a wizard. That’s valid.

But here’s the thing — that was the tutorial. The training wheels version. Ollama’s real muscle is in what happens after the pull: shaping model behavior, squeezing performance out of whatever GPU you have (or don’t have), and integrating it with actual tools.

This post covers the stuff the quickstart guide glosses over. Modelfiles, quantization trade-offs, GPU offloading, context tuning, the REST API, and how to stop your model from cold-starting every single request. Practical examples throughout. No fluff. Well, minimal fluff.

Modelfiles: Baking Your Personality In

A Modelfile is Ollama’s version of a Dockerfile — a plain text recipe that defines how a model behaves. You can layer a custom system prompt on top of any base model, tune parameters, and save the whole thing as a named model you can call anytime.

Here’s the basic structure:

```
FROM llama3

PARAMETER temperature 0.7
PARAMETER top_p 0.9
PARAMETER num_ctx 4096

SYSTEM """
You are a grumpy but accurate Linux sysadmin named Dave.
You answer questions correctly, but you always complain about how the user
should have Googled it first. Keep responses under 200 words.
"""
```

Save that as Modelfile, then run:

```
ollama create grumpy-dave -f Modelfile
ollama run grumpy-dave
```

You now have a persistent, named model with Dave’s whole personality baked in. No need to paste a system prompt every time you open a session.

The Four Directives You Actually Need

FROM — the base model. Can be a model name (llama3, mistral) or a path to a GGUF file if you’re loading something you downloaded manually.

PARAMETER — tuning knobs. The important ones:

- temperature: creativity dial. 0.1 is a boring accountant, 1.5 is a caffeine-fueled conspiracy theorist. 0.7 is the sweet spot for most tasks.

- top_p: controls vocabulary diversity. Leave it at 0.9 unless you have a reason to touch it.

- num_ctx: context window size in tokens. More on this shortly.

- num_gpu: how many GPU layers to load. This is the performance lever.

SYSTEM — your system prompt, verbatim. This is how you give the model a job title, constraints, a persona, or a set of rules. Use triple quotes for multiline.

TEMPLATE — the prompt format wrapper. You usually don’t need to set this — Ollama handles it per-model. But if you’re loading a raw GGUF from Hugging Face, you may need to specify the chat template manually so the model knows how to parse turns.
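If you end up maintaining several personas, it can help to generate Modelfiles from code instead of hand-editing them. Here is a minimal sketch of that idea. The helper name render_modelfile is made up for this post, and it only covers FROM, PARAMETER, and SYSTEM.

```python
def render_modelfile(base, parameters=None, system=None):
    """Build Modelfile text from a base model, PARAMETER values, and a SYSTEM prompt.

    Hypothetical helper for illustration; it is not part of Ollama.
    """
    sections = [f"FROM {base}"]
    if parameters:
        # One PARAMETER line per tuning knob, kept together as a block.
        sections.append(
            "\n".join(f"PARAMETER {name} {value}" for name, value in parameters.items())
        )
    if system:
        # Triple quotes let the system prompt span multiple lines.
        sections.append(f'SYSTEM """{system}"""')
    return "\n\n".join(sections) + "\n"


modelfile_text = render_modelfile(
    "llama3",
    parameters={"temperature": 0.7, "top_p": 0.9, "num_ctx": 4096},
    system="You are a grumpy but accurate Linux sysadmin named Dave.",
)
print(modelfile_text)
```

Write the result to a file called Modelfile and the ollama create command works on it exactly as it does on a hand-written one.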

Quantization: The Trade-Off Nobody Explains Clearly

When you pull a model, you’re often pulling a quantized version. Most models ship with 16-bit (sometimes 32-bit) floating-point weights, and quantization shrinks those down to lower precision. That means smaller file sizes and faster inference, at the cost of some accuracy.

The common formats you’ll see:

| Format | Size (7B) | Quality | RAM Needed |
|---|---|---|---|
| Q4_K_M | ~4.1 GB | Good | ~6 GB |
| Q5_K_M | ~5.0 GB | Better | ~8 GB |
| Q8_0 | ~7.7 GB | Near-lossless | ~10 GB |
| F16 | ~14 GB | Full precision | ~18 GB |

Q4_K_M is the practical default for most people. It’s small, fast, and the quality degradation is hard to notice in casual use. Think of it as “good enough and it actually runs.”

Q8_0 is for when quality matters and you have the VRAM to spare. Coding tasks, reasoning, anything where precision counts — Q8 is worth the extra headroom.

F16 is for researchers and people with A100s. If you’re reading this blog, you probably don’t need it.
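You can sanity-check file sizes like these with back-of-the-envelope arithmetic: parameter count times bits per weight, divided by eight. The sketch below does that. The bits-per-weight figures are rough approximations I'm assuming for illustration (K-quants mix precisions, so real files vary by model), and the function name is made up.

```python
# Approximate bits per weight for common GGUF quantizations.
# Rough illustrative figures, not exact values for any particular model.
APPROX_BITS_PER_WEIGHT = {
    "Q4_K_M": 4.85,
    "Q5_K_M": 5.7,
    "Q8_0": 8.5,
    "F16": 16.0,
}


def estimate_file_gb(n_params, quant):
    """Estimate model file size in GB (10^9 bytes) for a parameter count and quant."""
    bits = APPROX_BITS_PER_WEIGHT[quant]
    return n_params * bits / 8 / 1e9


# Estimate a 7B-parameter model at each quantization level.
for quant in APPROX_BITS_PER_WEIGHT:
    print(f"{quant:7s} ~{estimate_file_gb(7e9, quant):.1f} GB")
```

Expect the results to land in the same neighborhood as the table rather than match it exactly. Runtime memory is higher than file size because the context cache and runtime buffers come on top.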

To pull a specific quantization:

```
ollama pull llama3:8b-instruct-q8_0
```

Check what’s available for a model at ollama.com/library.

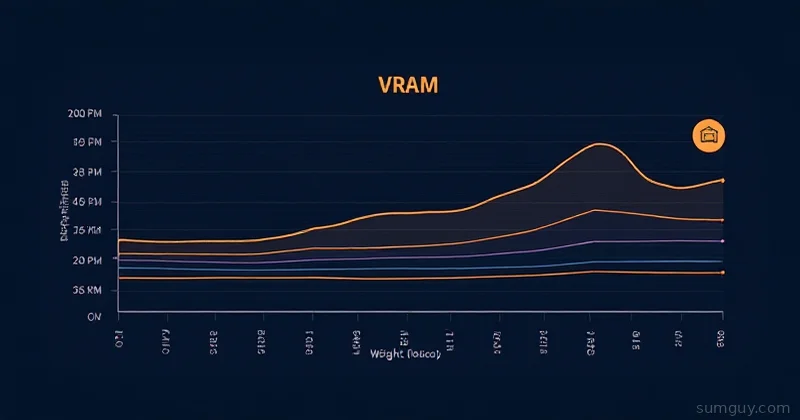

GPU Layer Offloading: The Real Performance Lever

Here’s where people go wrong: they install Ollama, they have a GPU, and they wonder why inference is still painfully slow. Nine times out of ten, they haven’t checked how many layers are actually hitting the GPU.

Ollama offloads model layers to GPU memory automatically, but it can only fit what fits. If your model is 8GB and your VRAM is 6GB, some layers stay on CPU and everything slows to a crawl.

You can control this explicitly with num_gpu in your Modelfile or via the API:

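```
PARAMETER num_gpu 35
```

This tells Ollama to load 35 layers onto the GPU. A 7B model typically has 32-33 transformer layers plus a few more. Setting num_gpu 99 is the “just put everything on the GPU” shorthand: the value is effectively capped at the number of layers the model actually has. Note that an explicit num_gpu overrides Ollama’s automatic fit estimate, so if the whole model is bigger than your VRAM you may hit out-of-memory errors instead of a graceful fallback.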

To see what’s actually happening:

```
ollama ps
```

This shows running models, how much VRAM they’re using, and what processor they’re running on. If you see 100% CPU next to your model, that’s your problem right there.
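The same information is available over HTTP from the /api/ps endpoint, which is handy if you want a script to warn you when a model spills onto the CPU. A minimal sketch, assuming the response entries carry size and size_vram fields in bytes. The sample numbers are invented and the helper names are mine.

```python
import json
import urllib.request


def gpu_fraction(model_entry):
    """Fraction of a loaded model's memory that sits in VRAM (0.0 means all CPU)."""
    total = model_entry.get("size", 0)
    if total <= 0:
        return 0.0
    return model_entry.get("size_vram", 0) / total


def fetch_running_models(base_url="http://localhost:11434"):
    """Return the list of loaded models reported by a running Ollama server."""
    with urllib.request.urlopen(f"{base_url}/api/ps") as resp:
        return json.load(resp).get("models", [])


# Invented sample entry so the sketch runs without a server.
sample = {"name": "llama3:latest", "size": 6_000_000_000, "size_vram": 4_500_000_000}
print(f"{sample['name']}: {gpu_fraction(sample):.0%} on GPU")
```

Against a live server, loop over fetch_running_models() and flag anything where gpu_fraction comes back below 1.0.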

The bitter truth: running a 70B model on 8GB VRAM is not a configuration problem you can fix. It’s a hardware problem. Q4_K_M of a 70B model is roughly 40GB. You need either a beefy GPU, multiple GPUs, or the patience of a monk.

For 8GB VRAM, stay in the 7B-13B range with Q4_K_M or Q5_K_M quantization. That’s where you’ll get snappy inference.
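To get a feel for the numbers before you start fiddling with num_gpu, here is a rough estimate of how many layers fit in a given amount of VRAM. It is a sketch under loud assumptions: every layer is treated as the same size, reserved_gb is a guessed allowance for the context cache and runtime overhead, and the layer counts in the examples are approximations. Ollama's own estimate is more careful than this.

```python
def layers_that_fit(model_gb, n_layers, vram_gb, reserved_gb=1.5):
    """Estimate how many equally sized layers fit in VRAM after reserving headroom.

    Hypothetical helper: real layers differ in size, and reserved_gb is a guess.
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


# A 7B model at Q4_K_M (~4.1 GB, assumed 33 layers) on an 8 GB card.
print(layers_that_fit(4.1, 33, 8))

# A 70B model at Q4_K_M (~40 GB, assumed 81 layers) on the same card.
print(layers_that_fit(40, 81, 8))
```

The first case fits entirely. The second gets only a small slice of its layers onto the GPU, which is the hardware problem described above in numeric form.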

Context Length: Don’t Just Max It Out

num_ctx controls how many tokens the model can “see” at once — your conversation history plus the current prompt. More context means the model remembers more, but it also means more VRAM usage and slower inference.

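Ollama’s default has historically been 2048 tokens, regardless of what the model itself supports (newer releases may ship a larger default, so check your version). Setting it to 32768 because you saw that in a tutorial is how you accidentally push layers off the GPU and into slow CPU territory.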

A practical guide:

- Chat and Q&A: 2048–4096 is fine

- Summarizing documents: 8192–16384

- Long-form analysis: 32768+ (if your hardware allows)

Set it in your Modelfile:

```
PARAMETER num_ctx 8192
```

Or override it per-request in the API if you need different context for different tasks.
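Why does context cost VRAM? Most of it is the key/value cache, which grows linearly with num_ctx. The sketch below works through the arithmetic. The default shape (32 layers, 8 KV heads, head dimension 128, 16-bit cache values) is my assumption for a Llama 3 8B class model; other models and cache settings will give different numbers.

```python
def kv_cache_bytes(n_ctx, n_layers=32, n_kv_heads=8, head_dim=128, bytes_per_value=2):
    """Approximate KV cache size in bytes for a given context length.

    Defaults assume a Llama 3 8B style shape with a 16-bit cache.
    """
    # The leading 2 accounts for storing both keys and values at every layer.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_ctx


for n_ctx in (2048, 8192, 32768):
    print(f"num_ctx={n_ctx:6d}  ~{kv_cache_bytes(n_ctx) / 2**30:.2f} GiB")
```

Under those assumptions the cache is about 0.25 GiB at 2048 tokens, 1 GiB at 8192, and 4 GiB at 32768. On an 8GB card that last figure is half your VRAM gone before a single weight is loaded.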

The REST API: Ollama Isn’t Just a CLI

Ollama exposes a REST API at http://localhost:11434 by default. This is how you integrate it with applications, scripts, and other tools.

Two endpoints matter most:

/api/generate — Single-turn completion

```
curl http://localhost:11434/api/generate \
  -d '{
    "model": "llama3",
    "prompt": "Explain VRAM in one sentence.",
    "stream": false
  }'
```

Good for one-shot tasks, scripts, and anything where you don’t need conversation history.
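Here is roughly what the same call looks like from a script, using only the Python standard library. Treat it as a sketch: the function names are mine, and the optional num_ctx argument shows one way to pass a per-request override through the options field.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model, prompt, num_ctx=None):
    """Build (but do not send) a non-streaming POST to /api/generate."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    if num_ctx is not None:
        # Per-request override of the context window.
        payload["options"] = {"num_ctx": num_ctx}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, num_ctx=None):
    """Send the request to a running Ollama server and return the generated text."""
    request = build_generate_request(model, prompt, num_ctx)
    with urllib.request.urlopen(request) as resp:
        return json.load(resp)["response"]


# With a server running:
# print(generate("llama3", "Explain VRAM in one sentence."))
```

Splitting request building from sending is a small design choice that makes the payload easy to inspect or log without a server in the loop.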

/api/chat — Multi-turn conversation

```
curl http://localhost:11434/api/chat \
  -d '{
    "model": "llama3",
    "messages": [
      {"role": "user", "content": "What is a Dockerfile?"},
      {"role": "assistant", "content": "A Dockerfile is a recipe for building a container image..."},
      {"role": "user", "content": "How is that different from a docker-compose.yml?"}
    ],
    "stream": false
  }'
```

You maintain the conversation history yourself and send it with each request. More verbose, but gives you full control.
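Since the server keeps no memory of the conversation, the bookkeeping lands on you. One possible way to handle it is a tiny wrapper that appends each turn to a list and resends the whole thing. ChatSession and post_chat are names I made up for this sketch, and the code assumes the non-streaming response carries the reply under a message key.

```python
import json
import urllib.request


def post_chat(payload, base_url="http://localhost:11434"):
    """POST a payload to /api/chat on a running Ollama server and parse the JSON reply."""
    request = urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as resp:
        return json.load(resp)


class ChatSession:
    """Keeps the message history client-side and resends it on every turn."""

    def __init__(self, model, send=post_chat):
        self.model = model
        self.send = send  # Injectable so the class can be exercised without a server.
        self.messages = []

    def ask(self, content):
        self.messages.append({"role": "user", "content": content})
        reply = self.send(
            {"model": self.model, "messages": self.messages, "stream": False}
        )
        # Store the assistant turn so the next request includes it.
        self.messages.append(reply["message"])
        return reply["message"]["content"]


# With a server running:
# session = ChatSession("llama3")
# print(session.ask("What is a Dockerfile?"))
# print(session.ask("How is that different from a docker-compose.yml?"))
```

The history grows with every turn, so for long conversations you will eventually want to trim or summarize old messages to stay inside num_ctx.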

Both endpoints support stream: true (the default) which sends tokens as they’re generated — useful for building UIs. Set stream: false for scripting when you just want the complete response.
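With streaming on, /api/generate sends one JSON object per line, each carrying a fragment of text plus a done flag. A minimal sketch of stitching those fragments back together follows. The sample lines are hand-written stand-ins trimmed to the two fields used here, and collect_stream is a name I picked.

```python
import json


def collect_stream(lines):
    """Join the text fragments from a stream of newline-delimited JSON chunks."""
    parts = []
    for raw in lines:
        if not raw.strip():
            continue  # Skip blank keep-alive lines.
        chunk = json.loads(raw)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break  # The final chunk marks the end of generation.
    return "".join(parts)


# Hand-written stand-ins for what the server would stream.
sample_lines = [
    b'{"response": "VRAM ", "done": false}',
    b'{"response": "is GPU memory.", "done": false}',
    b'{"response": "", "done": true}',
]
print(collect_stream(sample_lines))
```

In a real client you would pass the open HTTP response object instead of a list, since it can be iterated line by line. For a UI, print each fragment as it arrives rather than collecting them.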

Concurrent Requests and Keeping Models Warm

By default, Ollama unloads a model from memory after 5 minutes of inactivity. The next request has to reload it, which takes several seconds. If you’re building something that people actually use, this is annoying.

Keep a model loaded indefinitely:

```
curl http://localhost:11434/api/generate \
  -d '{"model": "llama3", "keep_alive": -1}'
```

keep_alive: -1 means “never unload.” Use keep_alive: 0 to force-unload immediately.
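Beyond -1 and 0, keep_alive also takes a number of seconds or a duration string such as "30m". The sketch below just builds the request bodies so you can see the shapes side by side. The helper name is made up, and sending a body with no prompt is what triggers a load (or unload) without generating anything.

```python
import json


def keep_alive_payload(model, keep_alive):
    """Build a prompt-less /api/generate body that only adjusts how long a model stays loaded.

    keep_alive: -1 keeps it loaded, 0 unloads now, otherwise seconds or a string like "30m".
    """
    return json.dumps({"model": model, "keep_alive": keep_alive})


print(keep_alive_payload("llama3", -1))     # Pin the model in memory.
print(keep_alive_payload("llama3", "30m"))  # Keep it for 30 minutes after the last request.
print(keep_alive_payload("llama3", 0))      # Unload immediately.
```

If you would rather set this once for the whole server, the OLLAMA_KEEP_ALIVE environment variable does the same job globally.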

Concurrent requests: Ollama 0.1.33+ supports running multiple models simultaneously and handling concurrent requests. By default it queues requests to a single model. For higher throughput, set:

```
OLLAMA_NUM_PARALLEL=4 ollama serve
```

This lets Ollama handle 4 simultaneous requests to the same model. Useful if you’re running a shared instance. Tune it based on your VRAM: each parallel slot needs its own context, so more parallel requests means more memory.
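On the client side, you only benefit from parallel slots if you actually send requests at the same time. Here is a minimal sketch using a thread pool. run_parallel and ask_ollama are names I invented, and max_workers=4 simply mirrors the server setting above.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def ask_ollama(prompt, model="llama3", base_url="http://localhost:11434"):
    """Send one non-streaming prompt to a running Ollama server and return the text."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as resp:
        return json.load(resp)["response"]


def run_parallel(prompts, send=ask_ollama, max_workers=4):
    """Send prompts concurrently and return the replies in the original order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(send, prompts))


# With a server running:
# print(run_parallel(["Define VRAM.", "Define quantization.", "Define GGUF."]))
```

Threads are enough here because the work is waiting on HTTP. If max_workers is higher than OLLAMA_NUM_PARALLEL, the extra requests just queue on the server.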

Plugging Into the Ecosystem

Ollama plays nicely with a growing list of tools. A few worth knowing:

Open WebUI — a full ChatGPT-style interface that connects to Ollama out of the box. Self-hosted, Docker-deployable, supports multiple models, conversation history, file uploads. If you want a browser UI for your local models, start here.

```
docker run -d -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -e OLLAMA_BASE_URL=http://host.docker.internal:11434 \
  ghcr.io/open-webui/open-webui:main
```

LiteLLM: a proxy that makes Ollama look like OpenAI’s API. If you have code that talks to OpenAI and you want to swap in local models without rewriting anything, LiteLLM is the bridge. Point your app at LiteLLM’s endpoint, configure it to use Ollama under the hood, done.

Anything that supports OpenAI-compatible APIs — Ollama itself has a /v1/ compatibility layer. Hit http://localhost:11434/v1/chat/completions and most OpenAI SDK code just works.
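To show what “just works” means in practice, here is a sketch that talks to the compatibility layer with an OpenAI-shaped request and pulls the reply out of an OpenAI-shaped response. It uses plain urllib rather than the OpenAI SDK so there is nothing to install. The function names are mine, and the bearer token is a placeholder because a local Ollama does not check it.

```python
import json
import urllib.request


def build_openai_chat_request(model, user_message, base_url="http://localhost:11434/v1"):
    """Build (but do not send) an OpenAI-style chat completion request aimed at Ollama."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder token: OpenAI clients expect one, the local server ignores it.
            "Authorization": "Bearer ollama",
        },
        method="POST",
    )


def extract_reply(response_body):
    """Pull the assistant text out of an OpenAI-style chat completion response."""
    return response_body["choices"][0]["message"]["content"]


# With a server running:
# with urllib.request.urlopen(build_openai_chat_request("llama3", "Hello")) as resp:
#     print(extract_reply(json.load(resp)))
```

With the real OpenAI SDK the change is usually the same two settings: point the base URL at http://localhost:11434/v1 and supply any non-empty API key.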

Troubleshooting Slow Inference

Before you blame the model, blame the setup.

Check GPU usage first:

```
ollama ps
# Look for VRAM usage and processor type
watch -n1 nvidia-smi  # Or rocm-smi for AMD
```

If you see the model running on CPU, you have a layer-fit problem. Reduce num_ctx or switch to a smaller quantization.

Model loading every request? Your keep_alive timeout is too short. Raise it, or set keep_alive: -1 to keep the model resident.

First token takes forever? That’s load time. Once the model is warm, subsequent tokens should come faster. Use ollama ps to confirm it’s resident in memory.

Slow on a good GPU? Check num_gpu in your Modelfile. If it’s not set and your model is large, you may be splitting layers across CPU and GPU — the worst of both worlds.

Linux and NVIDIA: Make sure you have the NVIDIA Container Toolkit installed if you’re running Ollama in Docker, and that the container is started with GPU access (for example, --gpus=all). Without both, the container can’t see the GPU at all, and you’re doing CPU inference while wondering why your RTX 3090 is idle.

Pull It All Together

Ollama goes from “neat demo” to “actual tool” once you start treating it like infrastructure. Modelfiles let you version and share model configurations. Quantization choices let you fit the right model into the right hardware. GPU tuning removes the biggest performance bottleneck. The API opens it up to every other tool in your stack.

The mental shift is treating local LLMs the same way you’d treat any other self-hosted service — with proper configuration, appropriate resource allocation, and some thought about how it fits into the rest of what you’re running.

Your 8GB GPU isn’t going to run Llama 70B at useful speeds. That’s fine. A well-tuned Llama 3.1 8B Q5_K_M with a solid system prompt will handle 90% of practical tasks at genuinely usable speeds. Optimize for what you have, not what you wish you had.

Dave the grumpy sysadmin would tell you you should have figured all this out yourself. But at least now you have somewhere to start.