The Seductive Lie

“Let’s set scrape interval to 5 seconds. More data is better, right?”

Everyone thinks this. Everyone’s wrong.

Prometheus is a tradeoff machine. You’re not getting “more data.” You’re paying in storage, CPU, and stability to get slightly more granular time series.

Default: 15 Seconds

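The prometheus.yml that ships with Prometheus sets the scrape interval to 15 seconds (leave the setting out entirely and the built-in default is 1 minute). Most people think 15 seconds is too slow. It’s not.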

Here’s why: rate() and increase() work best with 4-5 data points per window.

If you’re calculating rate(requests[1m]), that’s 60 seconds of data. At 15-second intervals, you have 4 samples. Perfect. At 5-second intervals, you have 12 samples — but the math isn’t better, and you’ve tripled storage.
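To make the sample counts above concrete, here is a minimal sketch. The helper name samples_in_window is made up for illustration, and the real count can be off by one depending on how scrapes line up with the query window.

```python
def samples_in_window(window_seconds: int, scrape_interval_seconds: int) -> int:
    """Approximate number of samples a range selector sees per series."""
    return window_seconds // scrape_interval_seconds


# Compare a [1m] window at a few scrape intervals.
for interval in (5, 15, 30, 60):
    count = samples_in_window(60, interval)
    print(f"{interval:>2}s scrape, [1m] window: {count} samples")
```

At 60 seconds the window holds a single sample, which is why a range of at least four scrape intervals is the usual rule of thumb.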

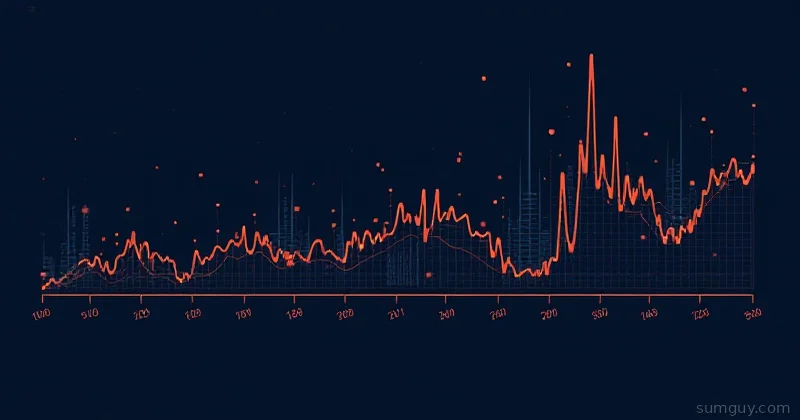

The Math

Let’s say you’re scraping 100 metrics per target, 50 targets, every 15 seconds.

Samples per minute = (100 metrics × 50 targets) × (60 / 15 seconds) = 5000 samples × 4 = 20,000 samples/minute

Scale to a month:

Samples per month ≈ 20,000 samples/min × 60 min × 24 hours × 30 days = ~864 million samples/month per shard

Prometheus typically compresses samples to around 1-2 bytes each, so that’s roughly 1-2 GB of sample data per month. Manageable.

Now change to 5-second intervals:

Samples per minute = 5000 samples × 12 = 60,000 samples/minute

Same math: ~2.6 billion samples/month. You’ve just tripled storage and compaction time.
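The same arithmetic as a small script, in case you want to plug in your own numbers. The function name and the 30-day month are assumptions for illustration only.

```python
def samples_per_month(metrics_per_target: int, targets: int,
                      scrape_interval_seconds: int, days: int = 30) -> int:
    """Total samples ingested over `days`, assuming every scrape succeeds."""
    series = metrics_per_target * targets
    scrapes = (days * 24 * 60 * 60) // scrape_interval_seconds
    return series * scrapes


at_15s = samples_per_month(100, 50, 15)
at_5s = samples_per_month(100, 50, 5)
print(f"15s: {at_15s:,} samples/month")
print(f" 5s: {at_5s:,} samples/month")
print(f"ratio: {at_5s / at_15s:.0f}x")
```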

What Changes When You Scrape Faster?

Storage

Roughly linear. 5-second intervals = 3x the disk space. Queries get slower.

CPU

Scraping is cheap. Compaction is expensive. Faster scraping = more samples in every block, so every compaction pass has more data to rewrite. Your Prometheus server spends cycles compacting instead of answering queries.

Query Accuracy

Not better. You’re not getting finer-grained truth; you’re getting noise. rate() over a 5-second window with 5-second samples usually sees just one data point, and rate() needs at least two to return anything at all.
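A deliberately simplified sketch of why a single sample is useless for a rate. This is not Prometheus’s actual rate() implementation, which also handles counter resets and extrapolates to the window edges; simple_rate and the sample values are invented for illustration.

```python
from typing import Optional, Sequence, Tuple

Sample = Tuple[float, float]  # (timestamp in seconds, counter value)


def simple_rate(samples: Sequence[Sample]) -> Optional[float]:
    """Per-second increase between the first and last sample in a window."""
    if len(samples) < 2:
        return None  # one point has no slope
    (t_first, v_first), (t_last, v_last) = samples[0], samples[-1]
    return (v_last - v_first) / (t_last - t_first)


one_point = [(0.0, 100.0)]
four_points = [(0.0, 100.0), (15.0, 130.0), (30.0, 160.0), (45.0, 190.0)]

print(simple_rate(one_point))    # None
print(simple_rate(four_points))  # 2.0
```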

Alerting Responsiveness

Faster scraping means faster alert detection. But only by seconds. If you can’t respond to an alert in 15 seconds anyway, what’s the point?

When Fast Scraping Makes Sense

High-Volatility Metrics

CPU spike detection. You want to catch spikes that last only a few seconds:

```yaml
global:
  scrape_interval: 15s   # default for every other job

scrape_configs:
  - job_name: 'cpu-spikes'
    scrape_interval: 5s  # per-job override
    static_configs:
      - targets: ['localhost:9100']
```

But only for this job. Everything else? 15 seconds.

Fast-Changing Time Series

If you’re tracking “requests per millisecond,” you need finer granularity. But if you’re tracking “requests per minute,” you don’t.

SLO Tracking

Errors are discrete events. Error rates change in chunks. You don’t need 5-second samples to notice your error rate doubled. 15 seconds is fine.

What You Should Actually Do

```yaml
global:
  scrape_interval: 15s
  scrape_timeout: 10s
  evaluation_interval: 15s

scrape_configs:
  # Default: 15 seconds for everything
  - job_name: 'prometheus'
    static_configs:
      - targets: ['localhost:9090']

  # Faster scrape for time-critical metrics
  - job_name: 'gpu-metrics'
    scrape_interval: 5s
    static_configs:
      - targets: ['localhost:9400']

  # Slower scrape for stable, low-cardinality metrics
  - job_name: 'batch-jobs'
    scrape_interval: 60s
    static_configs:
      - targets: ['localhost:9200']
```

Let different jobs have different intervals. Batch jobs? 60 seconds. Core services? 15 seconds. GPU monitoring? 5 seconds.
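To see what per-job intervals buy you, here is a rough sketch that compares the ingestion rate of a mixed setup against scraping everything at 5 seconds. The series counts per job are made-up placeholders; swap in your own.

```python
from typing import Dict, Tuple

# job name -> (active series, scrape interval in seconds); series counts are hypothetical
JOBS: Dict[str, Tuple[int, int]] = {
    "prometheus": (1000, 15),
    "gpu-metrics": (200, 5),
    "batch-jobs": (3000, 60),
}


def ingest_rate(jobs: Dict[str, Tuple[int, int]]) -> float:
    """Samples per second when each job uses its own interval."""
    return sum(series / interval for series, interval in jobs.values())


def ingest_rate_uniform(jobs: Dict[str, Tuple[int, int]], interval_s: int) -> float:
    """Samples per second if every job were scraped at the same interval."""
    return sum(series for series, _ in jobs.values()) / interval_s


print(f"mixed intervals:  {ingest_rate(JOBS):.1f} samples/s")
print(f"everything at 5s: {ingest_rate_uniform(JOBS, 5):.1f} samples/s")
```

With these placeholder numbers the mixed setup ingests a fraction of what a blanket 5-second interval would, while the GPU job still gets its fast scrapes.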

Retention vs. Interval

Storage decisions compound:

```yaml
global:
  scrape_interval: 5s
```

With 15GB max storage and 5-second scrapes, a large enough workload gets ~3 days of retention.

With 15-second scrapes, the same workload gets ~9 days. That’s enough to catch day-long trends.

retention_days = (max_disk_GB × 1 billion bytes/GB) / (samples_per_day × bytes_per_sample)

Choose your retention window first. Then pick an interval that fits.
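A back-of-the-envelope version of the retention estimate. Two assumptions are baked in and labeled in the code: roughly 1.5 bytes per sample after compression, and a hypothetical workload of 200,000 active series, chosen because it lands near the 3-day and 9-day figures above.

```python
def retention_days(max_disk_gb: float, samples_per_second: float,
                   bytes_per_sample: float = 1.5) -> float:
    """Days of data that fit on disk; bytes_per_sample is an assumed average."""
    bytes_per_day = samples_per_second * 86_400 * bytes_per_sample
    return (max_disk_gb * 1e9) / bytes_per_day


ACTIVE_SERIES = 200_000  # hypothetical workload, not taken from a real system

for interval in (5, 15):
    days = retention_days(15, ACTIVE_SERIES / interval)
    print(f"{interval:>2}s scrapes: about {days:.1f} days in 15 GB")
```

Treat the result as a rough guide only. Real disk usage also includes the WAL and index overhead, which this sketch ignores.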

Scrape Timeout vs. Scrape Interval

These two settings trip people up:

```yaml
global:
  scrape_interval: 15s  # How often to scrape
  scrape_timeout: 10s   # How long before giving up
```

scrape_timeout must not exceed scrape_interval. If your target takes 12 seconds to respond and your timeout is 10 seconds, every scrape times out and the target is marked down (up == 0). Nothing else complains unless you alert on it.

If a target is slow, you have options:

- Increase scrape_timeout (but keep it below scrape_interval)

- Reduce what the target exposes

- Profile why the exporter is slow

Never set scrape_timeout higher than scrape_interval. Prometheus will reject the config.
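If you generate configs from templates, a tiny lint step can catch these mistakes before Prometheus does. The sketch below is a hypothetical helper, not part of any Prometheus tooling, and it is intentionally conservative: it flags any timeout that leaves no headroom below the interval.

```python
from typing import List, Optional


def lint_scrape_settings(interval_s: float, timeout_s: float,
                         observed_duration_s: Optional[float] = None) -> List[str]:
    """Return human-readable problems with a job's scrape timing."""
    problems = []
    if timeout_s >= interval_s:
        problems.append("scrape_timeout should stay below scrape_interval")
    if observed_duration_s is not None and observed_duration_s > timeout_s:
        problems.append("target responds slower than scrape_timeout; scrapes will time out")
    return problems


print(lint_scrape_settings(15, 10))        # healthy settings
print(lint_scrape_settings(15, 10, 12))    # the slow target from the example above
print(lint_scrape_settings(5, 10))         # timeout larger than interval
```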

Monitor Prometheus Itself

Prometheus exposes its own metrics at /metrics. Keep an eye on scrape performance:

```promql
# Scrape duration across targets (p99)
quantile(0.99, scrape_duration_seconds)

# Targets that are currently down
up == 0

# How many samples are ingested per second
rate(prometheus_tsdb_head_samples_appended_total[5m])
```

If scrape_duration_seconds is regularly approaching your scrape_timeout, you need to either increase the interval (and the timeout with it), reduce metrics cardinality, or add more Prometheus capacity.
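As a rough illustration of that headroom check, the sketch below flags targets whose scrape duration eats most of the available time budget. It assumes you have already pulled per-target scrape_duration_seconds values into a dict; the target names, durations, and the 80% threshold are all invented for the example.

```python
from typing import Dict, List


def slow_targets(durations_s: Dict[str, float], budget_s: float,
                 threshold: float = 0.8) -> List[str]:
    """Targets whose scrape duration is at or above `threshold` of the budget."""
    limit = budget_s * threshold
    return sorted(t for t, d in durations_s.items() if d >= limit)


# Hypothetical readings of scrape_duration_seconds, keyed by target.
durations = {
    "localhost:9090": 0.04,
    "localhost:9100": 0.9,
    "localhost:9400": 8.5,
}

print(slow_targets(durations, budget_s=10))
```

Here only localhost:9400 is flagged, since 8.5 seconds is above 80% of a 10-second budget.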

The Real Talk

Prometheus is not a time-series database for microsecond-level detail. It’s a metrics database for operational monitoring. You don’t need 5-second granularity to know your system is on fire.

Start at 15 seconds. Only go faster if:

- You’ve measured storage impact

- You’ve confirmed Prometheus CPU load stays under 50%

- You actually need the finer granularity

Otherwise you’re just paying 3x the cost for 1% better alerting.

Your disk (and your 2 AM sleep) will thank you.