Every File You’ve Ever Cared About Lives On Someone Else’s Server

The internet is fragile. Not at the infrastructure level — the cables and routers are fine — but at the naming level. HTTP says “go get the file at this specific server at this specific path.” When the server goes away, the file goes away. The URL was never a description of the file, it was a description of where the file used to be.

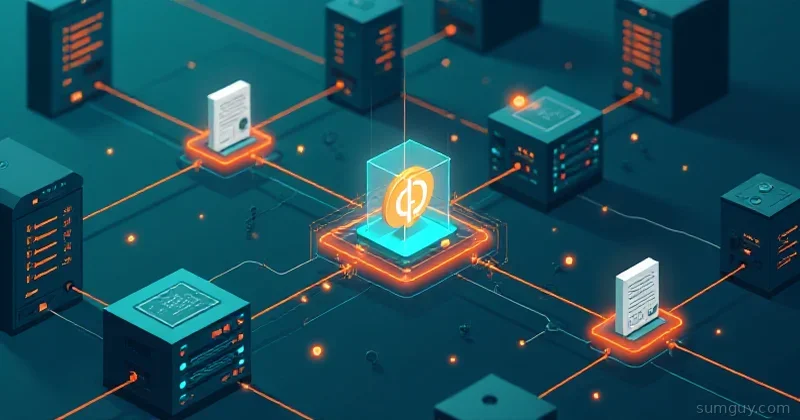

IPFS (InterPlanetary File System) flips this on its head. Instead of asking “where is this file?”, IPFS asks “what is this file?” Every file gets an address based on its cryptographic hash. The same file, anywhere in the network, has the same address. You can get it from whoever has it — doesn’t matter who.

It’s a genuinely different mental model, and it has real applications beyond the blockchain hype that tends to follow it around.

Content Addressing vs Location Addressing

The core concept, explained without the buzzwords:

HTTP (location addressing):

- URL = https://example.com/files/report.pdf

- Means: “Ask the server at example.com for the file at /files/report.pdf”

- If the server is down, you get nothing

- The same file at a different URL is a different resource as far as HTTP is concerned

- Servers can silently change the content at that URL

IPFS (content addressing):

- CID (Content Identifier) = QmXoypizjW3WknFiJnKLwHCnL72vedxjQkDDP1mXWo6uco

- Means: “Give me the content whose cryptographic hash (SHA-256 by default) matches this value”

- Any node that has it can serve it

- The same file, added with the same settings (chunking, CID version), always has the same CID

- If the content changes, the CID changes — you can’t silently serve different content

The CID is derived from a hash of the content, so it’s both an address and an integrity check. Content that doesn’t match the CID is rejected: your node verifies every block it receives.
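A minimal sketch of the idea in Python, assuming a plain SHA-256 hex digest stands in for the CID. Real CIDs are more involved (the hash is wrapped with format information, and large files are split into blocks), so `toy_address` and `ToyStore` are hypothetical names for illustration, not part of any IPFS library.

```python
import hashlib


def toy_address(content: bytes) -> str:
    """Return a toy content address: the SHA-256 hex digest of the bytes.

    Assumption: this stands in for a CID. It is NOT a real IPFS CID.
    """
    return hashlib.sha256(content).hexdigest()


class ToyStore:
    """A tiny in-memory content-addressed store."""

    def __init__(self) -> None:
        self._blocks: dict[str, bytes] = {}

    def put(self, content: bytes) -> str:
        # The address is computed from the bytes, never chosen by the caller.
        address = toy_address(content)
        self._blocks[address] = content
        return address

    def get(self, address: str) -> bytes:
        content = self._blocks[address]
        # Integrity check: recompute the hash and compare it with the address.
        if toy_address(content) != address:
            raise ValueError("content does not match its address")
        return content


store = ToyStore()
addr_a = store.put(b"hello ipfs")
addr_b = store.put(b"hello ipfs")   # same bytes, same address
addr_c = store.put(b"hello ipfs!")  # one byte differs, new address
print(addr_a == addr_b, addr_a == addr_c)  # prints: True False
```

The address doubles as the integrity check: anyone holding the address can recompute the hash and reject bytes that don't match.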

How the DHT Works (Brief Version)

IPFS uses a Distributed Hash Table (DHT) to find who has a given CID. When you add a file:

- Your node calculates the CID

- Announces to the network: “I have content at CID X”

- Other nodes record this in the DHT

When you request a CID:

- Your node queries the DHT: “who has CID X?”

- DHT returns a list of peers that announced it

- You connect to those peers and download the content

- Your node verifies the hash matches the CID

- Optionally, you now serve it too (you “seed” what you fetch)

This is similar to how BitTorrent works — you’re downloading from multiple peers, each piece is verified, and downloaders become uploaders.
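The announce and lookup steps above can be sketched as a toy model. This assumes a single shared dictionary in place of the real DHT (which is spread across many nodes) and a SHA-256 hex digest in place of a real CID; `ToyNetwork` and its methods are hypothetical names, not Kubo APIs.

```python
import hashlib


def toy_cid(content: bytes) -> str:
    """Stand-in for a CID: SHA-256 hex digest (not a real IPFS CID)."""
    return hashlib.sha256(content).hexdigest()


class ToyNetwork:
    """One shared dict plays the role of the DHT's provider records."""

    def __init__(self) -> None:
        self.providers: dict[str, set[str]] = {}      # cid -> peer ids
        self.peers: dict[str, dict[str, bytes]] = {}  # peer id -> local blocks

    def add(self, peer_id: str, content: bytes) -> str:
        """Store content on a peer and announce 'I have CID X'."""
        cid = toy_cid(content)
        self.peers.setdefault(peer_id, {})[cid] = content
        self.providers.setdefault(cid, set()).add(peer_id)
        return cid

    def fetch(self, requester: str, cid: str) -> bytes:
        """Ask who has the CID, download, verify, then become a provider."""
        for peer_id in sorted(self.providers.get(cid, set())):
            content = self.peers.get(peer_id, {}).get(cid)
            if content is None or toy_cid(content) != cid:
                continue  # peer no longer has it, or sent bad bytes
            self.peers.setdefault(requester, {})[cid] = content
            self.providers[cid].add(requester)
            return content
        raise LookupError(f"no provider returned valid content for {cid}")


network = ToyNetwork()
cid = network.add("alice", b"quarterly report")
data = network.fetch("bob", cid)
print(sorted(network.providers[cid]))  # prints: ['alice', 'bob']
```

After the fetch, both peers are listed as providers, which is the "downloaders become uploaders" behaviour in miniature.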

Installing IPFS

The most widely used IPFS implementation is Kubo (previously go-ipfs), which ships the ipfs command-line tool:

    # Download a release (v0.27.0 shown here; check dist.ipfs.tech for the latest)
    wget https://dist.ipfs.tech/kubo/v0.27.0/kubo_v0.27.0_linux-amd64.tar.gz
    tar -xzf kubo_v0.27.0_linux-amd64.tar.gz
    cd kubo
    sudo bash install.sh

    # Verify
    ipfs version

    # Initialize your node
    ipfs init
    # Creates ~/.ipfs with your node's identity key and config

    # Start the daemon
    ipfs daemon

You’ll see output showing your node’s Peer ID (a hash of your public key) and the addresses it’s listening on.

For production or always-on usage, create a systemd service:

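    [Unit]
    Description=IPFS Daemon
    After=network.target

    [Service]
    User=ipfs
    ExecStart=/usr/local/bin/ipfs daemon
    Restart=on-failure
    Environment="IPFS_PATH=/home/ipfs/.ipfs"

    [Install]
    WantedBy=multi-user.target

Save that as a unit file named ipfs.service (for example under /etc/systemd/system/), then create the user, initialize its repo, and enable the service:

    sudo useradd -m -s /bin/bash ipfs
    sudo -u ipfs ipfs init
    sudo systemctl enable --now ipfs

Adding and Retrieving Files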

    # Add a file to IPFS
    ipfs add myfile.txt
    # Output: added QmXoypiz... myfile.txt

    # Add a directory
    ipfs add -r /my/directory/

    # Compute the CID without adding the file to your node
    ipfs add --only-hash myfile.txt

    # Retrieve a file by CID (get saves it to disk, cat prints it to stdout)
    ipfs get QmXoypiz...
    ipfs cat QmXoypiz...

    # Check what you have locally
    ipfs pin ls
    ipfs repo stat

Pinning: Why Your Files Will Disappear Without It

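Here’s the catch nobody tells you upfront: IPFS has a garbage collector. Anything in your local repo that isn’t pinned can be evicted when garbage collection runs, whether you trigger it manually or start the daemon with --enable-gc. ipfs add pins what you add by default, but content added with --pin=false, or unpinned later, is fair game.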

Pinning tells IPFS “keep this content forever, don’t garbage collect it.”

    # Pin a CID
    ipfs pin add QmXoypiz...

    # List pinned content
    ipfs pin ls --type recursive

    # Unpin (allows GC to collect it)
    ipfs pin rm QmXoypiz...

    # Run garbage collection manually
    ipfs repo gc

    # Check repo size
    ipfs repo stat

Content you retrieve (but didn’t add) is cached temporarily but not pinned, so it can be garbage collected. If you want to keep content you fetched from someone else, pin it.
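A toy model of how pins and garbage collection interact, assuming a flat set of pinned CIDs (real Kubo pins can be recursive and cover whole trees of blocks). `ToyRepo` and the `cid-...` strings are hypothetical and only illustrate the rule "GC removes whatever is not pinned".

```python
class ToyRepo:
    """In-memory stand-in for a local IPFS repo with a pin set."""

    def __init__(self) -> None:
        self.blocks: dict[str, bytes] = {}  # cid -> content
        self.pins: set[str] = set()

    def store(self, cid: str, content: bytes, pin: bool = False) -> None:
        """Keep a block locally; pin=True models content you chose to keep."""
        self.blocks[cid] = content
        if pin:
            self.pins.add(cid)

    def pin_add(self, cid: str) -> None:
        if cid not in self.blocks:
            raise KeyError(f"cannot pin unknown block {cid}")
        self.pins.add(cid)

    def pin_rm(self, cid: str) -> None:
        self.pins.discard(cid)

    def gc(self) -> list[str]:
        """Delete every unpinned block and return the CIDs that were removed."""
        removed = sorted(cid for cid in self.blocks if cid not in self.pins)
        for cid in removed:
            del self.blocks[cid]
        return removed


repo = ToyRepo()
repo.store("cid-added", b"my file", pin=True)
repo.store("cid-fetched", b"someone else's file")  # cached only
repo.store("cid-keeper", b"fetched, then pinned")
repo.pin_add("cid-keeper")
collected = repo.gc()
print(collected, sorted(repo.blocks))
# prints: ['cid-fetched'] ['cid-added', 'cid-keeper']
```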

IPFS Desktop

If you prefer a GUI, IPFS Desktop wraps the Kubo daemon with a visual interface showing connected peers, your files, and network stats. It’s decent for exploration and demo purposes, less useful for server deployments.

Download from: https://github.com/ipfs/ipfs-desktop/releases

Public Gateways: IPFS for People Who Don’t Run IPFS

Public IPFS gateways let you access IPFS content via a regular HTTP URL — no IPFS client needed:

    https://ipfs.io/ipfs/QmXoypiz...
    https://cloudflare-ipfs.com/ipfs/QmXoypiz...
    https://dweb.link/ipfs/QmXoypiz...

This is how you share IPFS content with people who don’t have the daemon running. Just give them the gateway URL.
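If you share links often, a small helper can build the gateway URLs for you. This is only a sketch: `gateway_urls` is a hypothetical function, the host tuple uses two of the gateways listed above, and the optional `path` argument assumes the CID points at a directory.

```python
from urllib.parse import quote

# Two of the public gateway hosts mentioned above; swap in your own.
GATEWAY_HOSTS = ("https://ipfs.io", "https://dweb.link")


def gateway_urls(cid, path="", hosts=GATEWAY_HOSTS):
    """Return one path-style gateway URL per host for the given CID."""
    # Percent-encode the optional sub-path, keeping "/" separators intact.
    suffix = "/" + quote(path.lstrip("/")) if path else ""
    return [f"{host.rstrip('/')}/ipfs/{cid}{suffix}" for host in hosts]


for url in gateway_urls("QmXoypizjW3WknFiJnKLwHCnL72vedxjQkDDP1mXWo6uco"):
    print(url)
```

Passing `hosts=["http://localhost:8080"]` produces the equivalent link for a local gateway.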

You can also run your own gateway:

    # Your local gateway is already running at:
    http://localhost:8080/ipfs/QmXoypiz...

Pinata and Web3.Storage: Pinning Services

Running your own IPFS node is great, but your home lab isn’t always on. Pinning services keep your content alive on their infrastructure:

- Pinata (pinata.cloud): Simple paid pinning, good API, generous free tier

- Web3.Storage: Free storage backed by Filecoin, focuses on persistence

- nft.storage: Specifically for NFT metadata (yes that’s a thing)

    # Upload to Pinata via API
    import requests

    url = "https://api.pinata.cloud/pinning/pinFileToIPFS"
    headers = {"Authorization": "Bearer YOUR_JWT_TOKEN"}

    with open("myfile.txt", "rb") as f:
        response = requests.post(url, files={"file": f}, headers=headers)

    response.raise_for_status()  # Fail loudly on auth or upload errors
    print(response.json()["IpfsHash"])  # Your CID

Using a pinning service + your local node is a solid combo: you serve content locally when your node is up, and the pinning service keeps it alive when it’s not.

Real Use Cases That Aren’t NFTs

Static websites: Deploy a static site to IPFS once, get a permanent CID. Update by deploying a new version with a new CID. Use IPNS (InterPlanetary Name System) or DNSLink to point a mutable name at the latest CID.

    # Add a static site directory
    ipfs add -r --cid-version=1 ./public/
    # Note the root CID

    # Publish to IPNS (your node's mutable name)
    ipfs name publish /ipfs/QmNewCIDHere

    # Resolve IPNS to find current CID
    ipfs name resolve /ipns/your-peer-id

Software distribution: Distribute binaries by CID. Users can verify they got exactly what you published, because the hash is the guarantee.

Archive and backup: Content-addressed storage means deduplication is free. Two machines with the same file contribute the same CID, no duplicate storage.
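Why deduplication falls out for free can be seen in a small sketch. It assumes fixed-size chunks keyed by their SHA-256 digest; the 4-byte chunk size is purely for the demo (real IPFS blocks are far larger), and `add_file` is a hypothetical helper, not an IPFS API.

```python
import hashlib

CHUNK_SIZE = 4  # demo value only; real block sizes are much larger


def add_file(store: dict[str, bytes], data: bytes) -> list[str]:
    """Split data into chunks, store each under its hash, return the addresses."""
    addresses = []
    for start in range(0, len(data), CHUNK_SIZE):
        chunk = data[start:start + CHUNK_SIZE]
        address = hashlib.sha256(chunk).hexdigest()
        store[address] = chunk  # an identical chunk lands on the same key
        addresses.append(address)
    return addresses


store: dict[str, bytes] = {}
file_a = add_file(store, b"AAAABBBBCCCC")
file_b = add_file(store, b"AAAABBBBDDDD")  # shares its first two chunks with file_a
print(len(file_a) + len(file_b), len(store))  # prints: 6 4
```

Six chunk references resolve to four stored chunks, because the two shared chunks hash to the same addresses.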

Resilient documentation: Put your docs on IPFS. Even if your site goes down, people who pinned it can still serve it.

Internal file sharing: Within a home lab, IPFS can replace some ad-hoc file sharing patterns — add a file, give someone the CID, they retrieve it. No HTTP server needed.

Limitations: The Honest Part

No deletion: You cannot delete content from IPFS. You can unpin your local copy and stop serving it, but if others have pinned it, it stays in the network. Privacy-sensitive data should never go on IPFS.

Performance: Retrieval speed depends on how many peers have the content and how well connected they are. Popular content is fast. Obscure content with one peer on a slow connection is very slow.

Content moderation: The decentralized nature means there’s no central authority to remove illegal content. Gateways can refuse to serve certain CIDs, but the content itself persists as long as someone pins it.

Not a replacement for databases: IPFS stores files, not structured data. You can store JSON, but you can’t query it. It’s a file system, not a database.

NAT traversal: Home lab nodes behind NAT may have trouble being found by peers. IPFS has NAT traversal tools, but it’s not always reliable. A VPS or port-forwarded setup helps.

Quick Reference

    # Add file, get CID
    ipfs add file.txt

    # Retrieve by CID
    ipfs get QmABCD...

    # Pin to keep it
    ipfs pin add QmABCD...

    # Start daemon
    ipfs daemon

    # Check node info
    ipfs id
    ipfs swarm peers | wc -l # How many peers you're connected to

    # Gateway URL for sharing
    echo "https://ipfs.io/ipfs/$(ipfs add -q file.txt)"

IPFS doesn’t solve every storage problem. But for archiving, content integrity verification, and building things that survive individual server outages, it’s a genuinely interesting tool. Add it to your home lab, play with it, and you’ll find a use for it.

Your 2 AM self will appreciate the link that still works three years from now.