The Mystery: Idle SSH Sessions Disconnect

You SSH into a server, leave the session open while you work on something else, come back 30 minutes later, and get `Connection reset by peer`. Nothing looked wrong on your end. The server didn’t crash. What happened?

TCP keepalives. Or rather, the lack of them.

How TCP Connections Actually Die

A TCP connection has three parties: your client, the server, and the network between them. If the network goes down for two minutes while you’re idle:

- Neither your client nor the server sends anything

- The stateful devices in between (NAT gateways, firewalls) silently forget about the connection

- When you type something and try to send, packets go nowhere

- The connection is dead, but both ends think it’s still alive

Keepalives solve this by periodically poking the other end: “You still there?” The other end responds and proves the connection still works.

Without keepalives, you don’t know a connection is dead until you try to use it.

Check Your Current Keepalive Settings

```bash
# System-wide TCP keepalive settings:
cat /proc/sys/net/ipv4/tcp_keepalive_time
cat /proc/sys/net/ipv4/tcp_keepalive_intvl
cat /proc/sys/net/ipv4/tcp_keepalive_probes

# Default output:
# tcp_keepalive_time: 7200 (seconds until first keepalive)
# tcp_keepalive_intvl: 75 (seconds between keepalives)
# tcp_keepalive_probes: 9 (how many probes before giving up)
```

That 7200 is the killer. Two hours of idle time before even the first keepalive packet goes out. Perfect recipe for dead connections.
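The same three values can be read from a script, for example as part of a health check. This is a minimal sketch that assumes a Linux host; the function name `read_keepalive_settings` and the `base` parameter are illustrative, not part of any standard tool.

```python
from pathlib import Path

# The three sysctl files discussed above, relative to /proc/sys/net/ipv4.
KEEPALIVE_KEYS = ("tcp_keepalive_time", "tcp_keepalive_intvl", "tcp_keepalive_probes")


def read_keepalive_settings(base="/proc/sys/net/ipv4"):
    """Return the system-wide keepalive settings as a dict of ints.

    `base` is a parameter only so the function can be pointed at another
    directory (for example in tests); on a real Linux host the default applies.
    """
    settings = {}
    for key in KEEPALIVE_KEYS:
        # Each file holds a single integer followed by a newline.
        settings[key] = int((Path(base) / key).read_text().strip())
    return settings
```

Calling `read_keepalive_settings()` on a Linux machine returns whatever the kernel is currently using, so it reflects any `sysctl -w` changes immediately.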

What Those Numbers Mean

- tcp_keepalive_time: Wait this long before sending the first keepalive (7200s = 2 hours)

- tcp_keepalive_intvl: Wait this long between keepalives (75s)

- tcp_keepalive_probes: Send this many before giving up (9 probes)

Math: 2 hours + (75s × 9) = 2 hours and 11 minutes until the connection is declared dead. Most firewalls kill idle connections way faster.
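The arithmetic above can be written as a small helper, which makes it easy to try other values. This is a sketch of the approximation used in this article (idle wait plus one interval per unanswered probe); the function names are illustrative.

```python
def detection_seconds(keepalive_time, keepalive_intvl, keepalive_probes):
    """Approximate seconds of silence before the kernel declares the peer dead."""
    # Idle wait before the first probe, then one interval per unanswered probe.
    return keepalive_time + keepalive_intvl * keepalive_probes


def as_hms(seconds):
    """Format a number of seconds as 'Hh MMm SSs'."""
    hours, remainder = divmod(seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours}h {minutes:02d}m {secs:02d}s"


total = detection_seconds(7200, 75, 9)  # the Linux defaults quoted above
print(total, as_hms(total))  # 7875 2h 11m 15s
```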

Tune Keepalives System-Wide

For a server or desktop that holds long-lived connections (SSH sessions, database connections):

```bash
# Make keepalives happen faster:
sudo sysctl -w net.ipv4.tcp_keepalive_time=300
sudo sysctl -w net.ipv4.tcp_keepalive_intvl=30
sudo sysctl -w net.ipv4.tcp_keepalive_probes=5

# Permanent (survives reboot):
sudo tee -a /etc/sysctl.conf << EOF
net.ipv4.tcp_keepalive_time=300
net.ipv4.tcp_keepalive_intvl=30
net.ipv4.tcp_keepalive_probes=5
EOF

sudo sysctl -p
```

With these settings:

- First keepalive at 5 minutes

- Retry every 30 seconds

- Give up after 5 tries (2.5 minutes of failures)

- Total: Detect dead connections within 7-8 minutes

Much better than 2 hours.

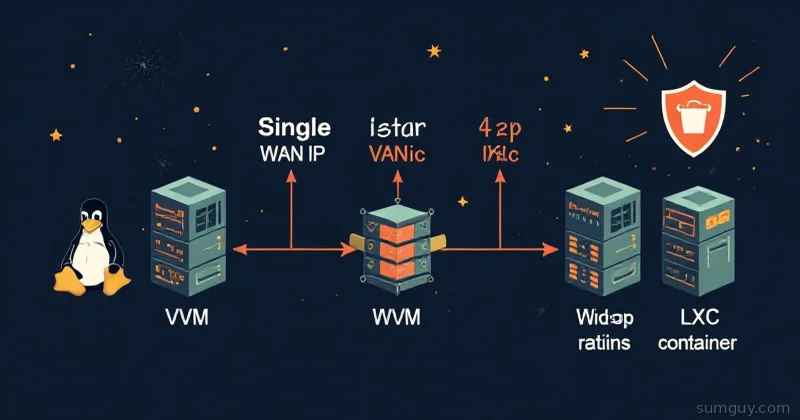

Per-Socket Keepalive Configuration

Different connections need different tuning. SSH clients should be aggressive. Database pools should be more conservative. Use socket options:
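At the lowest level, per-socket tuning is a handful of `setsockopt` calls on the socket object itself. The sketch below assumes Python's standard `socket` module; `enable_keepalive` is an illustrative helper name, and the timer options are applied only where the platform exposes them, since `TCP_KEEPIDLE` and friends are not available everywhere.

```python
import socket


def enable_keepalive(sock, idle=300, interval=30, count=5):
    """Turn on TCP keepalive for one socket and, where supported, tune its timers."""
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE, 1)
    # TCP_KEEPIDLE / TCP_KEEPINTVL / TCP_KEEPCNT are the Linux names; other
    # platforms may lack some of them, so set only the ones that exist.
    tunables = (
        ("TCP_KEEPIDLE", idle),       # seconds idle before the first probe
        ("TCP_KEEPINTVL", interval),  # seconds between probes
        ("TCP_KEEPCNT", count),       # unanswered probes before giving up
    )
    for name, value in tunables:
        option = getattr(socket, name, None)
        if option is not None:
            sock.setsockopt(socket.IPPROTO_TCP, option, value)


sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
enable_keepalive(sock)
# A non-zero value means SO_KEEPALIVE is on for this socket.
print(sock.getsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE) != 0)
sock.close()
```

Settings applied this way override the system-wide sysctl values for that one socket only, which is why applications and libraries usually expose them as their own configuration options.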

SSH Client (~/.ssh/config)

```
Host *
    ServerAliveInterval 60
    ServerAliveCountMax 5
```

This tells SSH: “Send a keepalive every 60 seconds, give up after 5 timeouts.” Dead connection detected within 5 minutes.

Database Connections (Python Example)

```python
import psycopg2

# libpq applies these as SO_KEEPALIVE, TCP_KEEPIDLE, TCP_KEEPINTVL and
# TCP_KEEPCNT on the connection's socket. They only affect TCP connections,
# so a host is required (the hostname here is a placeholder).
conn = psycopg2.connect(
    "host=database.example.com dbname=mydb user=myuser",
    keepalives=1,            # enable TCP keepalive
    keepalives_idle=300,     # seconds idle before the first probe
    keepalives_interval=30,  # seconds between probes
    keepalives_count=5,      # unanswered probes before giving up
)
```

Or in Node.js:

```javascript
const net = require('net');
const socket = net.createConnection({
  host: 'database.example.com',
  port: 5432
});

socket.setKeepAlive(true, 300 * 1000); // initial delay in milliseconds
```

Nginx (Upstream Connections)

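```nginx
upstream backend {
    server backend.example.com:3000;
    keepalive 32;
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        proxy_http_version 1.1;
        proxy_set_header Connection "";
        proxy_connect_timeout 5s;
        proxy_read_timeout 300s;
    }
}
```

Note that `keepalive 32` keeps idle upstream connections open for reuse; it is HTTP-level connection reuse, not TCP keepalive probing. To turn on `SO_KEEPALIVE` for upstream sockets, nginx 1.15.6 and later provide `proxy_socket_keepalive on;`.

Check If Keepalive Is Enabled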

For an existing connection (like an SSH session):

```bash
# Get the socket info:
lsof -i :22  # Shows SSH connections

# Get the PID, then:
cat /proc/[PID]/net/tcp | head
```

Or use ss to show keepalive status:

```bash
ss -o state established '( dport = :ssh or sport = :ssh )'
```

The output includes a `timer:(keepalive,...)` field if keepalive is active on the socket.
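When checking many connections, it can help to pull the keepalive timer out of `ss -o` output programmatically. The sketch below assumes the `timer:(name,expiry,count)` field that `ss -o` prints; the sample line and its addresses are made up for illustration, and `keepalive_timer` is a hypothetical helper name.

```python
import re

# Matches the timer field printed by `ss -o`, e.g. timer:(keepalive,119min,0)
TIMER_RE = re.compile(r"timer:\((?P<kind>\w+),(?P<expires>[^,]+),(?P<count>\d+)\)")


def keepalive_timer(line):
    """Return (time_until_next_probe, counter) if the line shows a keepalive timer, else None."""
    match = TIMER_RE.search(line)
    if match is None or match.group("kind") != "keepalive":
        return None
    return match.group("expires"), int(match.group("count"))


# Illustrative line in the shape ss -o prints; the addresses are documentation ranges.
sample = "0 0 192.0.2.10:22 198.51.100.7:51234 timer:(keepalive,119min,0)"
print(keepalive_timer(sample))  # ('119min', 0)
```

A line with no timer field, or with a different timer such as a retransmission timer, returns `None`, which is the case to investigate.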

Real-World Example: Failing Database Backups

```bash
# Long-running backup over flaky network:
mysqldump -h remote.db.com --opt --all-databases > backup.sql

# Without keepalives, if the network hiccups during the 2-hour dump:
# the connection hangs, then disconnects. The backup loses 1.5 hours of progress.

# With keepalives every 5 minutes:
# the dead connection is detected within minutes,
# so the job fails fast and can be retried.

# Fix: configure MySQL client timeouts
# (option availability varies by client and version; check `mysql --help`)
mysql -h remote.db.com --connect-timeout=10 \
  --read-timeout=300 \
  --write-timeout=300
```

Or in my.cnf:

```ini
[client]
connect_timeout=10
read_timeout=300
write_timeout=300
```

Debugging Keepalive Issues

If connections still drop randomly after tuning keepalives:

```bash
# Check if keepalive is actually enabled on the socket:
ss -o state established '( dport = :3306 or sport = :3306 )' | grep -i "keepalive"

# The -o flag shows timer information, including the keepalive timer
# If no "timer:(keepalive,...)" entry appears, keepalive isn't enabled on that socket

# Check application-level timeouts too:
# Many apps have their own timeout settings that apply regardless of OS settings

# Example: MySQL client timeout
mysql -h localhost --read-timeout=300 --write-timeout=300 -e "SELECT 1;"
```

Real Production Example: Elasticsearch Cluster

Elasticsearch nodes stop responding to each other because of firewall timeouts:

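```yaml
# In elasticsearch.yml:
transport:
  tcp:
    keep_alive: true
    keep_alive_interval: 60s

network:
  host: 0.0.0.0
```

Without this, firewall rules would drop idle cluster communication. Nodes would think other nodes were dead and trigger unnecessary re-elections. Setting names differ between Elasticsearch versions (recent releases document `transport.tcp.keep_idle`, `transport.tcp.keep_interval`, and `transport.tcp.keep_count`), so confirm them against the reference for your release.

Then in the OS:

```bash
# On each Elasticsearch machine:
sudo sysctl -w net.ipv4.tcp_keepalive_time=300
sudo sysctl -w net.ipv4.tcp_keepalive_intvl=30
sudo sysctl -w net.ipv4.tcp_keepalive_probes=5
```

Now nodes start probing after 5 idle minutes and detect a dead peer within about 7.5 minutes (instead of waiting more than 2 hours).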

Firewall Considerations

Some firewalls and NAT devices drop idle connections after a very short timeout, so keepalives tuned for 5 minutes arrive too late. If you’re behind a carrier-grade NAT or an aggressive firewall:

```bash
# Make keepalives happen VERY frequently:
sudo sysctl -w net.ipv4.tcp_keepalive_time=60    # 1 minute
sudo sysctl -w net.ipv4.tcp_keepalive_intvl=10   # 10 seconds
sudo sysctl -w net.ipv4.tcp_keepalive_probes=6   # 1 minute total
```

This is aggressive but sometimes necessary behind restrictive firewalls. Your traffic overhead increases slightly, but that beats random timeouts.

For SSH over especially bad connections (satellite, metered networks):

```
# ~/.ssh/config:
Host satellite-server
    ServerAliveInterval 30
    ServerAliveCountMax 10
```

That’s a keepalive every 30 seconds, giving up after 5 minutes of silence. Terrible for battery, but it gives the connection the best chance of staying alive.
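One way to reason about which profile to pick is to compare the idle time before the first keepalive with the firewall's idle timeout. The sketch below is illustrative only: the firewall timeouts passed in and the 50% safety margin are assumptions, and the profile values are the `tcp_keepalive_time` settings used in this article.

```python
# Idle seconds before the first keepalive, per profile from this article.
PROFILES = {"linux default": 7200, "tuned": 300, "aggressive": 60}


def first_probe_beats_timeout(keepalive_idle, firewall_idle_timeout, margin=0.5):
    """True if the first keepalive is sent well before the firewall drops the flow.

    `margin` is an assumed safety factor: with 0.5, the first probe must go out
    within half of the firewall's idle timeout.
    """
    return keepalive_idle <= firewall_idle_timeout * margin


def safe_profiles(firewall_idle_timeout):
    """Names of the profiles whose first probe arrives in time."""
    return [
        name
        for name, idle in PROFILES.items()
        if first_probe_beats_timeout(idle, firewall_idle_timeout)
    ]


print(safe_profiles(900))  # assumed 15-minute firewall timeout -> ['tuned', 'aggressive']
print(safe_profiles(120))  # assumed 2-minute firewall timeout  -> ['aggressive']
```

The firewall's real timeout is rarely documented, so in practice these numbers come from observing how long an idle session survives.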

The Bottom Line

- Default Linux keepalive time (2 hours) is terrible for anything real-world

- Network timeouts happen faster (usually 15-30 minutes for firewalls)

- Tune to 5-minute detection for long-lived connections

- Use socket options per-application for fine control

- SSH: `ServerAliveInterval 60` (non-negotiable)

- Databases: Enable keepalives with short intervals

- Proxies: Enable upstream keepalives

- Behind aggressive firewalls: Make keepalives happen every 30-60 seconds

Your 2 AM self will thank you when the backup completes instead of hanging silently.