The Problem With Dumb Automations

Zapier and n8n have made automation accessible to anyone who can drag a box across a screen, and that’s genuinely great. But most automations built this way are fragile in the same specific way: they work until the world doesn’t cooperate with your assumptions.

You build an email filter: if subject contains “invoice”, move to the billing folder. Works great for two weeks. Then a client sends an email with the subject “Re: Re: Re: Q3 meeting notes” that contains an attached PDF invoice, and it sits in your inbox forever.

The problem is that rule-based automation is essentially pattern matching dressed up in a nice GUI. It’s fast, it’s predictable, and it absolutely cannot handle ambiguity. The moment your input data stops conforming to the exact pattern you anticipated, the automation does something wrong — usually silently.

LLMs are the opposite. They’re slow (relatively), probabilistic, and not always right — but they handle ambiguity the way a human does. You can tell an LLM “figure out if this email needs a response today or if it can wait until next week” and it will actually do that, even for emails it’s never seen before.

The good news: you don’t have to choose. n8n lets you combine both. Use rule-based nodes for the fast, deterministic stuff. Drop in an LLM node for the parts that require judgment.

n8n in 30 Seconds

n8n is a source-available (“fair-code”) workflow automation tool: think Zapier, but self-hostable, more powerful, and with a workflow editor that doesn’t make you want to cry. It connects to hundreds of services through built-in nodes and lets you write custom JavaScript or Python when the built-in nodes aren’t enough.

The free self-hosted version has no node limits. You own your data. It runs in Docker. That’s the pitch.

Running n8n + Ollama with Docker Compose

Before building any workflows, you need both services running. Here’s a compose file that runs n8n alongside Ollama:

```yaml
version: "3.8"

services:
  n8n:
    image: n8nio/n8n:latest
    restart: always
    ports:
      - "5678:5678"
    environment:
      - N8N_HOST=localhost
      - N8N_PORT=5678
      - N8N_PROTOCOL=http
      - WEBHOOK_URL=http://localhost:5678/
      - GENERIC_TIMEZONE=America/New_York
    volumes:
      - n8n_data:/home/node/.n8n

  ollama:
    image: ollama/ollama:latest
    restart: always
    ports:
      - "11434:11434"
    volumes:
      - ollama_data:/root/.ollama
    # Uncomment below if you have a GPU:
    # deploy:
    #   resources:
    #     reservations:
    #       devices:
    #         - driver: nvidia
    #           count: 1
    #           capabilities: [gpu]

volumes:
  n8n_data:
  ollama_data:
```

After starting the stack (for example with `docker compose up -d`), pull a model into Ollama:

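```bash
docker exec -it <ollama-container> ollama pull llama3.2
# Or a similarly sized alternative:
docker exec -it <ollama-container> ollama pull phi3.5
# Or, for a genuinely smaller, faster model (use "llama3.2:1b" as the model name in requests):
docker exec -it <ollama-container> ollama pull llama3.2:1b
```

Now n8n can reach Ollama at http://ollama:11434 (using the Docker service name).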
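If you want to confirm the pull worked before building anything, Ollama’s `/api/tags` endpoint lists the models available locally. Here is a minimal Node.js sketch of that check, assuming Node 18+ (for the built-in `fetch`) and that you run it on the host, where the compose file publishes Ollama on `localhost:11434`. The helper names `hasModel` and `checkOllama` are hypothetical:

```javascript
// Hypothetical helper: does the /api/tags response list the model we want?
// Ollama's /api/tags returns { models: [{ name: "llama3.2:latest", ... }, ...] }.
function hasModel(tagsResponse, modelName) {
  const names = ((tagsResponse && tagsResponse.models) || []).map((m) => m.name);
  if (modelName.includes(":")) {
    // An explicit tag such as "llama3.2:1b" must match exactly.
    return names.includes(modelName);
  }
  // A bare name such as "llama3.2" matches any pulled tag of that model.
  return names.some((n) => n === modelName || n.startsWith(`${modelName}:`));
}

// Query a running Ollama instance; resolves to true if the model is available.
async function checkOllama(modelName = "llama3.2", baseUrl = "http://localhost:11434") {
  const res = await fetch(`${baseUrl}/api/tags`);
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return hasModel(await res.json(), modelName);
}

// Usage: checkOllama("llama3.2").then((ok) => console.log(ok ? "ready" : "not pulled yet"));
```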

How n8n Talks to Ollama

n8n has a built-in “Ollama” node in recent versions, but the HTTP Request node works just as well and gives you more control. Ollama exposes an OpenAI-compatible API at /v1/chat/completions, which means n8n’s built-in OpenAI node also works with a custom base URL.

Using the HTTP Request Node (Universal Method)

Add an HTTP Request node with these settings:

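- Method: POST

- URL: http://ollama:11434/api/generate

- Body (JSON):

```
{
  "model": "llama3.2",
  "prompt": {{ JSON.stringify($json.prompt) }},
  "stream": false
}
```

Wrapping the expression in `JSON.stringify(...)`, rather than writing `"{{ $json.prompt }}"` inside quotes, escapes any quotes and newlines in the prompt; without it, the body becomes invalid JSON the first time an email contains either. With `"stream": false`, Ollama returns a single JSON object, and the generated text is in its `response` field. Access it with `{{ $json.response }}` in subsequent nodes.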
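When iterating on a prompt, it can be quicker to hit the same endpoint from a script than to re-run the workflow. A rough Node.js equivalent of the node above, assuming Node 18+ and a host-side URL (inside the compose network it would be `http://ollama:11434`); `buildGenerateBody` and `generate` are illustrative names, not part of n8n or any Ollama client library:

```javascript
// Request body for Ollama's native /api/generate endpoint.
// stream: false asks for a single JSON object instead of a stream of chunks.
function buildGenerateBody(prompt, model = "llama3.2", options = {}) {
  return { model, prompt, stream: false, options };
}

// Send a prompt and resolve to the generated text (the `response` field).
async function generate(prompt, { baseUrl = "http://localhost:11434", model = "llama3.2", options = {} } = {}) {
  const res = await fetch(`${baseUrl}/api/generate`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    // JSON.stringify escapes any quotes and newlines inside the prompt.
    body: JSON.stringify(buildGenerateBody(prompt, model, options)),
  });
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  const data = await res.json();
  return data.response;
}

// Usage (needs a running Ollama with llama3.2 pulled):
// generate("Reply with one word: URGENT, NORMAL, or LOW. 'Server is down.'", { options: { temperature: 0 } })
//   .then(console.log);
```

Building the body as an object and serializing it once means quotes and newlines in the prompt never break the request.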

Using the OpenAI-Compatible Endpoint

If you prefer the chat completions format (better for multi-turn conversation or system prompts):

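- URL: http://ollama:11434/v1/chat/completions

- Body:

```
{
  "model": "llama3.2",
  "messages": [
    {
      "role": "system",
      "content": "You are a triage assistant. Classify emails as URGENT, NORMAL, or LOW. Respond with only the classification word."
    },
    {
      "role": "user",
      "content": {{ JSON.stringify($json.emailBody) }}
    }
  ]
}
```

This endpoint returns the OpenAI response shape, so the reply text is at `{{ $json.choices[0].message.content }}` rather than `{{ $json.response }}`.

Practical Workflow 1: LLM Email Triage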
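The practical difference between the two endpoints is mostly where the reply lands. A sketch in the same spirit (Node 18+, host-side URL, hypothetical helper names) that builds an OpenAI-style request and reads the reply from `choices[0].message.content`, which is where the OpenAI chat completions format puts it:

```javascript
// OpenAI-style request body for Ollama's /v1/chat/completions endpoint.
function buildChatBody(systemPrompt, userContent, model = "llama3.2") {
  return {
    model,
    messages: [
      { role: "system", content: systemPrompt },
      { role: "user", content: userContent },
    ],
  };
}

// In the OpenAI chat format the reply text is at choices[0].message.content.
function extractChatReply(data) {
  const choice = data && Array.isArray(data.choices) ? data.choices[0] : undefined;
  return choice && choice.message ? choice.message.content : undefined;
}

// Send one system + user exchange and resolve to the assistant's reply text.
async function chat(systemPrompt, userContent, baseUrl = "http://localhost:11434") {
  const res = await fetch(`${baseUrl}/v1/chat/completions`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildChatBody(systemPrompt, userContent)),
  });
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return extractChatReply(await res.json());
}
```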

This is the workflow that converts email chaos into calm. The idea: any email that hits your inbox gets classified by priority before you ever open it.

Workflow structure:

Gmail Trigger → Extract Email Body → HTTP Request (Ollama) → IF Node → Label/Move Email

Step by step:

- Gmail Trigger node: fires when a new email arrives in your inbox

- Set node: extract and clean the email body into an `emailBody` field (and keep `subject`, which the prompt also uses):

```
{{ $json.text.replace(/\n\n+/g, '\n') }}
```

- HTTP Request node: send to Ollama with this prompt:

```
Classify this email into exactly one category: URGENT, NORMAL, or LOW.

URGENT = needs a response today, deadline within 24h, or server is on fire
NORMAL = should respond within a week, standard business communication
LOW = newsletters, FYI threads, automated notifications

Email subject: {{ $json.subject }}
Email body: {{ $json.emailBody }}

Reply with only the category word. No explanation.
```

- IF node: branch on `{{ $json.response }}`; if it contains “URGENT”, trigger a Slack notification

- Gmail node: add a label based on classification
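If you’d rather do the cleaning and prompt assembly in one Code node instead of a Set node plus an inline template, the logic is small. A sketch, assuming the incoming item has `subject` and `text` fields (the actual field names depend on how your Gmail Trigger is configured); `cleanBody` and `buildTriagePrompt` are hypothetical names, and the 4,000-character cap is an arbitrary guard against enormous emails:

```javascript
// Collapse runs of blank lines (same regex as the Set node expression) and trim.
function cleanBody(text) {
  return String(text || "").replace(/\n\n+/g, "\n").trim();
}

// Assemble the triage prompt; maxChars is a hypothetical cap on the body length.
function buildTriagePrompt(subject, body, maxChars = 4000) {
  return [
    "Classify this email into exactly one category: URGENT, NORMAL, or LOW.",
    "",
    "URGENT = needs a response today, deadline within 24h, or server is on fire",
    "NORMAL = should respond within a week, standard business communication",
    "LOW = newsletters, FYI threads, automated notifications",
    "",
    `Email subject: ${subject || "(no subject)"}`,
    `Email body: ${cleanBody(body).slice(0, maxChars)}`,
    "",
    "Reply with only the category word. No explanation.",
  ].join("\n");
}

// In an n8n Code node ("Run Once for Each Item" mode) this could become:
// return { json: { ...$input.item.json, prompt: buildTriagePrompt($input.item.json.subject, $input.item.json.text) } };
```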

The key to making this work reliably is the prompt design in step 3. Tell the LLM exactly what you want back and constrain the output format. “Reply with only the category word” is load-bearing — without it, you’ll get paragraphs of reasoning that your IF node can’t parse.

Practical Workflow 2: Webhook → LLM → Slack

A more general pattern: something sends a webhook to n8n (a GitHub comment, a Sentry alert, a form submission), the LLM processes and summarizes it, and the result goes to Slack.

Workflow structure:

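Webhook → HTTP Request (Ollama) → Slack

The Ollama prompt for a Sentry error alert:

```
You are an alert summarizer. Summarize this error alert in 2-3 sentences max.
Focus on: what broke, how many users affected, and whether it's likely a regression.
Use plain language, no jargon.

Alert data:
{{ JSON.stringify($json.body) }}
```

The Webhook node puts the incoming payload under `body`, which is why the prompt serializes `$json.body` rather than the whole item (which also carries the request headers).

The Slack message body:

```
:rotating_light: *New Alert*
{{ $json.response }}

<{{ $('Webhook').item.json.body.url }}|View in Sentry>
```

By the time the Slack node runs, `$json` is the Ollama response, so the link has to reach back to the Webhook node’s output. This assumes the node is named “Webhook” and that your alert payload carries a `url` field.

This is dramatically more useful than raw Sentry webhooks that dump JSON into Slack. A wall of stack trace is noise. Two sentences from an LLM is signal.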
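One detail worth handling: Slack’s mrkdwn format treats `&`, `<` and `>` as control characters, so an LLM summary that mentions something like “a < b” can garble the message. A small sketch of the same template with escaping, written as plain functions you could adapt inside an n8n Code node placed before the Slack node (`escapeSlack` and `buildAlertMessage` are hypothetical names):

```javascript
// Slack mrkdwn treats &, < and > as control characters; escape & first
// so the entities produced for < and > are not double-escaped.
function escapeSlack(text) {
  return String(text).replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Mirror of the Slack message template above, with the LLM summary escaped.
// The link line is only added when an alert URL is available.
function buildAlertMessage(summary, alertUrl) {
  const lines = [":rotating_light: *New Alert*", escapeSlack(String(summary).trim())];
  if (alertUrl) {
    lines.push(`<${alertUrl}|View in Sentry>`);
  }
  return lines.join("\n");
}
```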

Practical Workflow 3: Document Summarization Pipeline

You drop PDFs or long documents into a folder (via Nextcloud, S3, or local filesystem), n8n picks them up, summarizes them with Ollama, and saves the summaries alongside the originals.

Workflow structure:

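Watch Folder Trigger → Read File → HTTP Request (Ollama) → Write File

In n8n terms, the trigger and file steps map to the Local File Trigger and Read/Write Files from Disk nodes. For text-based documents, you can pass content directly. For PDFs, you’ll need a preprocessing step: either a Code node that calls a Python/JS PDF parser, or an external tool that converts PDF to text first. Recent n8n versions also include an Extract from File node that can pull text out of a PDF.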

The summarization prompt:

Summarize the following document in 3-5 bullet points.Focus on key decisions, action items, and important facts.Be concise — each bullet should be one sentence.

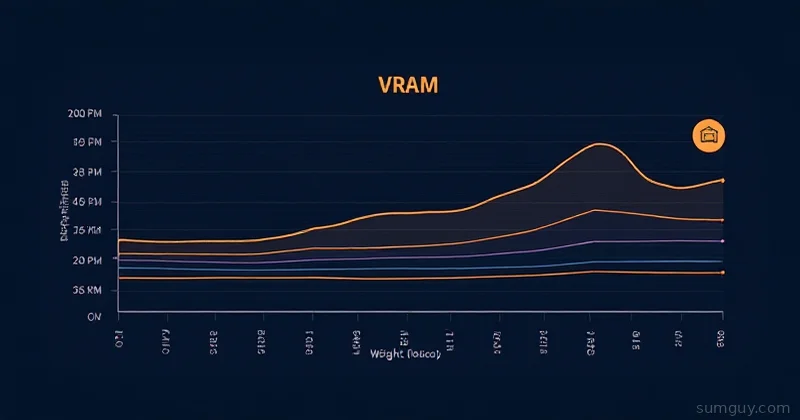

Document:{{ $json.fileContent.substring(0, 4000) }}Note the substring(0, 4000) — you need to chunk long documents for models with limited context windows. For a local llama3.2 model, stay under 4000 tokens to be safe. Larger models like llama3.1:70b can handle more, but they’re slower and require beefier hardware.
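The chunk-then-summarize approach can be sketched as a map-reduce loop. This assumes a character budget as a crude stand-in for tokens, and takes the actual model call as a parameter (for example, a function that posts to `/api/generate`), so `chunkText`, `summarizeLong` and the 4,000-character default are illustrative rather than tuned values:

```javascript
// Split text into chunks of at most maxChars characters, breaking on blank lines where possible.
// Characters are only a crude proxy for tokens.
function chunkText(text, maxChars = 4000) {
  const chunks = [];
  let current = "";
  for (const para of String(text).split(/\n{2,}/)) {
    // Hard-split any paragraph that is longer than maxChars on its own.
    for (let i = 0; i < para.length; i += maxChars) {
      const piece = para.slice(i, i + maxChars);
      // Start a new chunk if adding this piece (plus the "\n\n" separator) would overflow.
      if (current && current.length + 2 + piece.length > maxChars) {
        chunks.push(current);
        current = "";
      }
      current = current ? `${current}\n\n${piece}` : piece;
    }
  }
  if (current) chunks.push(current);
  return chunks;
}

// Map-reduce summarization: summarize each chunk, then summarize the partial summaries.
// `summarize` is any async (text) => string function, e.g. one that posts to /api/generate.
async function summarizeLong(text, summarize, maxChars = 4000) {
  const chunks = chunkText(text, maxChars);
  if (chunks.length <= 1) return summarize(chunks[0] || "");
  const partials = [];
  for (const chunk of chunks) {
    // Sequential on purpose, so a local model isn't hit with many requests at once.
    partials.push(await summarize(chunk));
  }
  return summarize(partials.join("\n"));
}
```

The reduce step runs only once here; if the joined partial summaries can themselves exceed the budget, you would chunk and summarize them again.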

Prompt Design Tips for n8n Workflows

Getting reliable output from LLMs in automated workflows is different from chatting with them. You don’t have a human in the loop to catch weird outputs. Here’s what makes the difference:

Constrain the output format explicitly. “Reply with JSON only. No markdown. No explanation.” is better than hoping the model formats things correctly. Then validate the output in a Code node before passing it downstream.
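A minimal validation sketch for that Code node, assuming the model was asked for a single JSON object. It strips the Markdown code fences models sometimes add anyway, parses, and checks for required keys; `parseLlmJson` and its `{ ok, value, error }` result shape are hypothetical choices, not an n8n API:

```javascript
// Parse an LLM reply that should be a bare JSON object.
// Models sometimes wrap JSON in Markdown code fences despite instructions, so strip those first.
function parseLlmJson(raw, requiredKeys = []) {
  let text = String(raw).trim();
  const fenced = text.match(/^`{3}(?:json)?\s*([\s\S]*?)\s*`{3}$/);
  if (fenced) text = fenced[1];
  let value;
  try {
    value = JSON.parse(text);
  } catch (err) {
    return { ok: false, error: `invalid JSON: ${err.message}` };
  }
  // Reject arrays, null and primitives: downstream nodes expect an object.
  if (value === null || typeof value !== "object" || Array.isArray(value)) {
    return { ok: false, error: "expected a JSON object" };
  }
  const missing = requiredKeys.filter((k) => !(k in value));
  if (missing.length) return { ok: false, error: `missing keys: ${missing.join(", ")}` };
  return { ok: true, value };
}
```

In a Code node set to “Run Once for All Items”, you could map each item from `$input.all()` through it, keep `value` on success, and send the `ok: false` items down an error branch.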

Use temperature 0 for classification tasks. When calling Ollama’s native API, set `"temperature": 0` inside the `options` object of your request body. This makes the model more deterministic, which is what you want for tasks that need a consistent, predictable answer.

```
{
  "model": "llama3.2",
  "prompt": {{ JSON.stringify($json.prompt) }},
  "options": { "temperature": 0 },
  "stream": false
}
```

Add an error branch. LLMs occasionally return unexpected formats. Add an IF node after every LLM response that checks whether the output is what you expected. If not, route to a fallback: either a default value or a Slack message saying “this automation needs human review.”
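For the triage workflow, an exact-match check is safer than “contains URGENT”, which would also fire on a reply like “NOT URGENT”. A sketch of a normalizer plus a flag an IF node can branch on; falling back to NORMAL and the `needsReview` field name are assumptions for illustration:

```javascript
const CATEGORIES = ["URGENT", "NORMAL", "LOW"];

// Normalize a classification reply to one known label, or null if it isn't one.
function parseCategory(raw) {
  // Tolerate surrounding whitespace, lowercase, and trailing punctuation such as "Urgent."
  const word = String(raw ?? "").trim().toUpperCase().replace(/[^A-Z]+$/, "");
  return CATEGORIES.includes(word) ? word : null;
}

// Attach the parsed category plus a needsReview flag for a downstream IF node.
// Falling back to NORMAL is an arbitrary choice for this sketch.
function withTriageResult(item) {
  const category = parseCategory(item.response);
  return { ...item, category: category ?? "NORMAL", needsReview: category === null };
}

// In an n8n Code node ("Run Once for All Items"):
// return $input.all().map((item) => ({ json: withTriageResult(item.json) }));
```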

Log your prompts and responses. n8n doesn’t automatically persist execution data indefinitely. Add a simple Google Sheets or SQLite write node to log prompts, responses, and outcomes. You’ll thank yourself when something goes sideways and you need to debug why the LLM classified “server on fire” as LOW priority.

When to Use LLMs in Automations (and When Not To)

LLMs add latency, cost (even for local models — you’re paying in compute time), and unpredictability. Don’t reach for them when a regular node will do.

| Task | Use LLM? | Why |
|---|---|---|
| Email classification by sentiment/priority | Yes | Too ambiguous for rules |
| Summarizing long documents | Yes | Rules can’t summarize |
| Routing by subject keyword | No | Simple IF node is fine |
| Extracting a date from a structured field | No | Regex or JS is more reliable |
| Translating user feedback | Yes | Context-dependent |
| Checking if a file exists | No | Filesystem node |
| Writing a draft response | Yes | Creative/contextual task |

The pattern is: if you can write a rule for it that will work 99% of the time, write the rule. Bring in an LLM for the cases where no rule covers the full range of inputs.
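In code, “rules first, LLM for the rest” looks roughly like the sketch below. The two rules are hypothetical placeholders, and `classifyWithLlm` stands in for whatever Ollama call you use; the point is that the LLM only runs when no rule matches, as with the “Re: Re: Re: Q3 meeting notes” invoice from the opening example:

```javascript
// Rules first: cheap, deterministic checks for the obvious cases.
// These patterns are hypothetical examples; replace them with your own.
const RULES = [
  { test: (e) => /\binvoice\b/i.test(e.subject), route: "billing" },
  { test: (e) => /^(noreply|no-reply)@/i.test(e.from), route: "low-priority" },
];

// Returns the route of the first matching rule, or null when no rule applies.
function routeByRules(email) {
  const rule = RULES.find((r) => r.test(email));
  return rule ? rule.route : null;
}

// Only pay the LLM's latency for inputs the rules can't handle.
// `classifyWithLlm` is any async (email) => route function, e.g. an Ollama call.
async function routeEmail(email, classifyWithLlm) {
  const ruled = routeByRules(email);
  if (ruled !== null) return { route: ruled, via: "rule" };
  return { route: await classifyWithLlm(email), via: "llm" };
}
```

Returning `via` alongside the route also gives you something useful to log, since it shows how often the rules are actually carrying the load.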

Combining n8n’s reliable plumbing with Ollama’s flexible reasoning is one of the more underrated homelab setups right now. Your automations stop being brittle keyword matchers and start behaving like they have some understanding of what they’re actually doing. That’s a meaningful upgrade from “move email to folder if subject contains INVOICE.”