The VRAM Mystery

You fire up Ollama, load a model, run a few queries, then load a different model. Your GPU fills up. Then you load a third one and suddenly you’re swapping to CPU. What’s happening? Ollama doesn’t unload models between requests by default—it keeps them in VRAM.

This is actually intentional. A model sitting in GPU memory is fast. Reloading it from disk is slow. But if you’re juggling multiple models on limited hardware, this behavior gets painful fast.

TL;DR: Ollama holds loaded models in VRAM for 5 minutes after the last request. Override with OLLAMA_KEEP_ALIVE=<duration> globally, or pass "keep_alive": <duration> per request. Use "keep_alive": 0 to force-unload immediately. Inspect with ollama ps (newer builds) or nvidia-smi -l 1 live.

Jump to: Keep-alive tuning · Force-unload via API · Context window cost · Memory pressure & quantization · ollama ps

Understanding Ollama’s Memory Model

Ollama holds loaded models in VRAM until they time out or you explicitly unload them. The key setting is OLLAMA_KEEP_ALIVE.

By default, a model stays loaded for 5 minutes after you stop querying it. After that, Ollama unloads it to free VRAM.

    # List the models installed on disk (this is not the set loaded in VRAM)
    curl http://localhost:11434/api/tags

The response shows every installed model, but it tells you neither which ones are loaded nor how much VRAM they use. To see actual GPU memory consumption, use your system tools:

    # On NVIDIA
    nvidia-smi -l 1  # Refresh every 1 second

    # On AMD
    rocm-smi --showmeminfo vram

Watch nvidia-smi while you query a model. You’ll see the model’s weights load into VRAM, then sit there for 5 minutes.

Tuning Keep-Alive

Change how long Ollama keeps a model loaded with environment variables:

    # Start the server so models stay in VRAM for 30 seconds after their last request
    OLLAMA_KEEP_ALIVE=30s ollama serve

    # Or set it permanently for your login shell (Linux)
    echo 'export OLLAMA_KEEP_ALIVE=2m' >> ~/.profile
    source ~/.profile

Keep-alive values:

- 30s: Aggressive unloading, good for single-model workflows

- 2m: Reasonable middle ground, handles back-and-forth conversations

- -1 (any negative value): Never unload (keep everything loaded until you unload it manually or restart)

- 0: Unload immediately after each request

    # Force an immediate unload the blunt way:
    # stop Ollama and restart it (hard unload of every model)
    pkill ollama
    sleep 2
    ollama serve

Checking Loaded Models Programmatically

On builds that have the /api/ps endpoint, you can pair the list of loaded models with the total GPU memory reported by nvidia-smi:

    import subprocess

    import requests

    # /api/ps lists the models currently loaded (/api/tags lists everything installed)
    response = requests.get('http://localhost:11434/api/ps', timeout=10)
    response.raise_for_status()
    models = response.json().get('models', [])

    # Get NVIDIA VRAM usage in MiB; nvidia-smi prints one line per GPU, so sum them
    vram_output = subprocess.check_output(
        ['nvidia-smi', '--query-gpu=memory.used', '--format=csv,nounits,noheader']
    ).decode()
    vram_used = sum(int(line) for line in vram_output.split())

    print(f"Models loaded: {[m['name'] for m in models]}")
    print(f"VRAM used: {vram_used} MiB")

The Hard Choice: Context Windows vs. Memory

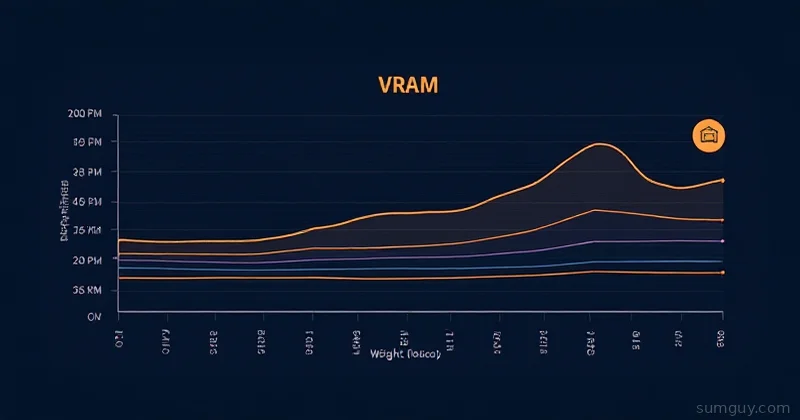

Larger context windows = more VRAM per model. A 7B model with 4K context uses ~6GB VRAM. The same model with 32K context? ~14GB.
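To see why context is so expensive, it helps to estimate the key/value cache, which grows linearly with num_ctx. The sketch below is a back-of-the-envelope calculation, not something Ollama reports: the helper name kv_cache_bytes is hypothetical, and the layer count, head dimension, and KV-head counts are assumed shapes for a 7B-class model with a 16-bit cache. Real usage adds compute buffers on top, so treat the output as a lower bound.

```python
def kv_cache_bytes(num_ctx, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV-cache size: one key and one value vector per layer per token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * num_ctx


GIB = 1024 ** 3

# Assumed 7B-class shapes (illustrative, not read from Ollama):
# 32 layers, head_dim 128, with either 8 KV heads (grouped-query attention)
# or 32 KV heads (full multi-head attention).
for n_kv_heads in (8, 32):
    for num_ctx in (2048, 4096, 32768):
        size = kv_cache_bytes(num_ctx, n_layers=32, n_kv_heads=n_kv_heads, head_dim=128)
        print(f"kv_heads={n_kv_heads:>2} num_ctx={num_ctx:>5}: {size / GIB:.2f} GiB")
```

The exact jump from 4K to 32K depends on the architecture, which is why the same context setting can cost a few GB on one model and far more on another.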

If you’re maxing out VRAM, you have three options:

- Reduce context window per request: use the num_ctx parameter

- Switch to smaller quantization: Q3_K instead of Q5_K

- Use CPU offloading: trade speed for space

    # Reduce context window to 2K (num_ctx must go inside "options")
    curl http://localhost:11434/api/generate \
      -d '{
        "model": "mistral",
        "prompt": "Why is memory hard?",
        "stream": false,
        "options": { "num_ctx": 2048 }
      }'

The Real Problem: Unplanned Persistence

Ollama’s default behavior assumes you’re running one model repeatedly. If you’re switching between models constantly — or running multiple models via a load balancer — you need to be explicit about unloading.

The clean way to force an unload is the keep_alive request parameter. Set it to 0 on any inference endpoint and the model unloads as soon as the request returns. You can also fire a no-op request with an empty prompt purely to trigger the unload:

    # Force-unload a specific model right now: no waiting, no restart
    curl http://localhost:11434/api/generate -d '{
      "model": "mistral:7b-q4_k",
      "keep_alive": 0
    }'

keep_alive works on /api/generate, /api/chat, and /api/embed. It accepts the same values as OLLAMA_KEEP_ALIVE: a duration string like "5m", a negative number for “never unload”, or 0 for “unload immediately”. Per-request beats global, so this overrides whatever the server default is.
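If you are driving Ollama from a script rather than from curl, the same unload request can be sketched in Python with only the standard library. The helper names build_unload_request and unload are hypothetical, the host is assumed to be the default local address, and "stream": false is added here only so the reply comes back as a single JSON object.

```python
import json
import urllib.request


def build_unload_request(model, host="http://localhost:11434"):
    """Build a POST to /api/generate with no prompt and keep_alive set to 0."""
    body = json.dumps({"model": model, "keep_alive": 0, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{host}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def unload(model, host="http://localhost:11434"):
    """Send the unload request; this needs a running Ollama server at `host`."""
    with urllib.request.urlopen(build_unload_request(model, host), timeout=30) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    # Show what would be sent without contacting the server.
    req = build_unload_request("mistral")
    print(req.full_url, req.data.decode())
```

Keeping the request builder separate from the network call makes it easy to check the payload before pointing it at a real server.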

Some other workarounds when you genuinely can’t change the request shape:

    # Monitor and log what's loaded every minute
    watch -n 60 'nvidia-smi | grep ollama'

    # If you're running multiple instances, use separate ports
    OLLAMA_HOST=127.0.0.1:11434 ollama serve &
    OLLAMA_HOST=127.0.0.1:11435 ollama serve &

    # Each instance manages its own VRAM independently

Memory Pressure: What Happens When You Exceed VRAM

You will run out of VRAM eventually. When Ollama tries to load a model and there’s not enough space, here’s what happens:

- It offloads the layers that don’t fit to system RAM and runs them on the CPU (slow path)

- Your system swap activates if available

- Or the kernel OOMs and kills processes

Watch for this in real time:

    # Terminal 1: Monitor VRAM + swap in real-time
    watch -n 1 'nvidia-smi --query-gpu=memory.used,memory.free --format=csv,nounits,noheader; free -h | grep Swap'

    # Terminal 2: Load your model and start querying
    curl http://localhost:11434/api/generate -d '{
      "model": "mistral:latest",
      "prompt": "Explain quantum computing in 2000 words",
      "stream": false
    }'

If VRAM fills and swap starts climbing, you’re in pain. Queries slow down 10-100x.

The Quantization Solution

Smaller quantization = smaller model = less VRAM. Trade quality for space:

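- Q8 (8-bit): ~90% of original quality, roughly 50% of the FP16 weight size

- Q6 (6-bit): ~85% of original quality, roughly 40% of the FP16 weight size

- Q4_K (4-bit): ~80% of original quality, roughly 30% of the FP16 weight size

- Q3_K (3-bit): ~70% of original quality, roughly 25% of the FP16 weight size

Example: A 13B model in Q8 needs roughly 13-14GB for the weights alone. Same model in Q4_K? Roughly 7-8GB. The context cache comes on top of that.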
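For a quick sanity check before pulling a model, weight size is roughly parameter count times bits per weight. The sketch below assumes approximate average bits-per-weight figures for llama.cpp-style quantization formats; those figures and the helper name weight_gib are illustrative assumptions, and the result excludes the context cache and runtime buffers.

```python
def weight_gib(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1024 ** 3


# Assumed average bits per weight (approximate; actual files vary by model).
BITS = {"F16": 16.0, "Q8_0": 8.5, "Q6_K": 6.6, "Q4_K_M": 4.8, "Q3_K_M": 3.9}

for name, bits in BITS.items():
    print(f"13B {name:7s} ~{weight_gib(13, bits):.1f} GiB")
```

Swap in 7 or 70 for the parameter count to compare other model sizes against the VRAM you actually have.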

    # Pull a smaller quantization
    ollama pull mistral:7b-q4_k

    # Check available models
    ollama list

For home lab setups, Q4_K is the sweet spot. You get reasonable quality without the VRAM debt.

Monitoring Loaded Models Over Time

Set up basic monitoring to catch creeping memory usage:

    import time
    from datetime import datetime

    import requests

    # /api/ps reports loaded models; /api/tags would list everything installed
    url = 'http://localhost:11434/api/ps'
    while True:
        try:
            resp = requests.get(url, timeout=10)
            models = [m['name'] for m in resp.json().get('models', [])]
            print(f"[{datetime.now().isoformat()}] Loaded: {', '.join(models) or 'none'}")
        except Exception as e:
            print(f"Error: {e}")

        # Sleep 30 seconds before checking again
        time.sleep(30)

Run this in a screen/tmux session and check periodically. Unload models hogging space with a keep_alive: 0 request, or by restarting Ollama if necessary.

Checking Currently Loaded Models (Newer Ollama)

Ollama 0.1.38+ added the ollama ps command and a matching /api/ps endpoint that shows exactly what’s loaded in memory right now:

    curl http://localhost:11434/api/ps | jq '.models[] | {name, size_vram}'

Output:

    {
      "name": "mistral:7b-q4_k",
      "size_vram": 4073415168
    }

That size_vram is bytes. Divide by 1073741824 for GB. Much better than guessing from nvidia-smi.
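The byte-to-GB conversion is easy to script. The sketch below assumes the /api/ps response also carries a total "size" field next to "size_vram", so the ratio of the two hints at how much of the model actually sits on the GPU. The summarize helper is hypothetical, and the "size" value in the sample payload is made up for illustration.

```python
import json

GIB = 1024 ** 3


def summarize(ps_response):
    """Return (name, VRAM in GiB, percent of the model resident on GPU) per model."""
    rows = []
    for m in ps_response.get("models", []):
        size = m.get("size", 0)
        vram = m.get("size_vram", 0)
        on_gpu = 100 * vram / size if size else 0.0
        rows.append((m["name"], round(vram / GIB, 2), round(on_gpu)))
    return rows


# Sample payload shaped like /api/ps output; "size" here is an assumed value.
sample = json.loads(
    '{"models": [{"name": "mistral:7b-q4_k",'
    ' "size": 4073415168, "size_vram": 4073415168}]}'
)
print(summarize(sample))
```

A GPU percentage well below 100 is a sign that part of the model has spilled to the CPU, which is usually the first symptom of memory pressure.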

Running Ollama as a Systemd Service

If you’re running Ollama on a server, configure OLLAMA_KEEP_ALIVE in the systemd unit:

    sudo systemctl edit ollama

In the editor that opens, add:

    [Service]
    Environment="OLLAMA_KEEP_ALIVE=1m"
    Environment="OLLAMA_MAX_LOADED_MODELS=2"

Then reload and restart:

    sudo systemctl daemon-reload
    sudo systemctl restart ollama

OLLAMA_MAX_LOADED_MODELS caps how many models stay resident simultaneously. Set it to match your available VRAM divided by your typical model size. Ollama has reshuffled environment-variable names between releases, so if the variable is rejected on your version, run ollama help serve and check the current list. Either way, the per-request keep_alive: 0 trick above works everywhere.
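The "VRAM divided by typical model size" rule of thumb can be written down as a tiny calculation. This is only a sketch: the function name max_loaded_models and the 1 GiB of headroom reserved for the desktop and runtime buffers are assumptions, not values that Ollama uses internally.

```python
def max_loaded_models(vram_gib, model_gib, headroom_gib=1.0):
    """Whole models that fit after reserving some headroom; never less than 1."""
    usable = max(vram_gib - headroom_gib, 0)
    return max(int(usable // model_gib), 1)


# A 24 GiB card versus an 8 GiB card, both with models of about 5 GiB each.
print(max_loaded_models(24, 5))
print(max_loaded_models(8, 5))
```

Use the model's loaded footprint (weights plus context cache) for model_gib, not just the file size on disk.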

Bottom Line

Ollama’s memory persistence is a feature, not a bug. It’s optimized for the happy path: single model, repeated queries. If you’re running multiple models, either embrace the 5-minute window, tune OLLAMA_KEEP_ALIVE to your workflow, or split models across separate Ollama instances. Use /api/ps to see exactly what’s loaded. Understanding quantization lets you fit more models in less space. Your 2 AM self will thank you for understanding this before production breaks.